A latency engineering note from making a multi-model agentic vision pipeline respond at the rate a human operator can act on.

The default deployment of a vision model is a request-response system: an image arrives, the model returns a result, the caller does something with it. The architecture works because the human in the loop is not waiting for the result in real time. The inspection report is read tomorrow; the audit is done next week.

A growing share of the deployments we work on do not look like this. A drone hovers over a bridge and the operator needs the next flight instruction before it moves on. A camera on a factory line captures thirty frames per second, and a defect missed by one frame is a defect missed forever. A field engineer points a phone at an equipment cabinet and expects the overlay to update as the camera moves.

This note is about what changes when sub-second response is a requirement, and what the architectural cost is.

The latency budget, written down

Before any optimization is meaningful, the budget has to be explicit. For an interactive inspection pipeline a useful target is end-to-end response under one second, measured from the frame leaving the sensor to a first usable response being available to the operator. We decompose this budget roughly as:

- Capture and transport: 50–150 ms

- Specialist scouts (detection / segmentation / OCR / depth, run in parallel): 100–300 ms

- VLM analyst pass over scout outputs and retrieved context: 300–500 ms

- Judge consistency check and decision emission: 50–150 ms
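Adding up the ranges is a useful sanity check. Run as a strict serial chain, the stage upper bounds sum to 1,100 ms, which exceeds the one-second target. The budget therefore only holds if the stages do not all hit their ceilings on the same frame, or if they overlap in time. The Python sketch below does the arithmetic. The stage keys are our own labels, not identifiers from the pipeline.

```python
# Stage budgets from the list above, in milliseconds: (low, high).
BUDGET_MS = {
    "capture_and_transport": (50, 150),
    "specialist_scouts": (100, 300),  # scouts run in parallel, so one range covers them
    "vlm_analyst": (300, 500),
    "judge_and_emit": (50, 150),
}
TARGET_MS = 1000  # first usable response within one second

def serial_bounds(budget: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Best and worst case if every stage finishes before the next one starts."""
    low = sum(lo for lo, _ in budget.values())
    high = sum(hi for _, hi in budget.values())
    return low, high

low, high = serial_bounds(BUDGET_MS)
print(f"serial sum: {low}-{high} ms against a {TARGET_MS} ms target")
# serial sum: 500-1100 ms against a 1000 ms target
```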

These numbers hold under specific operating conditions. The budget describes a streaming-first regime: the analyst reads structured scout outputs (not raw 4K imagery), prompts are kept short, output is consumed token-by-token, and the UI surfaces partial decisions before the full response completes. The hardware is a steady-state inference host with the analyst model resident in memory, system prompts prefix-cached, and the vision encoder pre-warmed. Under those conditions, our measurements on production hardware for a typical industrial scene fall inside the budget above.

This is not the only regime the Platform runs in. When the analyst has to do open-world reasoning on a full high-resolution image (as in our corner-case detection work), latency lives in the seconds, not the milliseconds. The two budgets coexist because they apply to different problems: this note is about the interactive inspection path, where a human is waiting on a frame-by-frame overlay.

The architectural decisions described below are the ones that kept us inside this budget. Every choice we made by default in the asynchronous batch case was the wrong one in the streaming case.

Streaming at every layer, not just at the boundaries

The single largest mistake in latency engineering is treating "streaming" as a property of the input. Consider a pipeline that streams audio frames in, then waits for the full utterance before transcribing, then for the full transcript before reasoning, then for the full reasoning trace before generating. It has not reduced latency in any way the operator can perceive. Each layer blocks on the batch boundary of the layer in front of it, so time-to-first-output is the sum of every layer's full processing time.
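A toy cost model makes the point concrete. It assumes a linear pipeline with one worker per layer, a fixed per-chunk cost at each layer, and unbounded buffers between layers. The four per-chunk costs are hypothetical, chosen only to show the shape of the result.

```python
from typing import Sequence

def batch_first_output_ms(per_item_ms: Sequence[float], n_items: int) -> float:
    """Each layer waits for the previous layer's whole batch, so the first
    output appears only when every layer has processed all n_items."""
    return sum(cost * n_items for cost in per_item_ms)

def streaming_first_output_ms(per_item_ms: Sequence[float]) -> float:
    """The first chunk flows through every layer without waiting for the rest."""
    return sum(per_item_ms)

def streaming_last_output_ms(per_item_ms: Sequence[float], n_items: int) -> float:
    """With all layers running concurrently, the slowest layer paces the
    remaining n_items - 1 chunks behind the first one."""
    return sum(per_item_ms) + (n_items - 1) * max(per_item_ms)

stages = [20, 30, 50, 10]  # hypothetical per-chunk costs (ms) for four layers
print(batch_first_output_ms(stages, 10))     # 1100
print(streaming_first_output_ms(stages))     # 110
print(streaming_last_output_ms(stages, 10))  # 560
```

Under these assumptions, streaming cuts time-to-first-output by a factor equal to the chunk count. The total time is bounded by the slowest layer rather than by the sum of all layers.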

A genuinely low-latency pipeline streams at every layer:

- Capture streams to the specialists. Frames flow into the scout models as they arrive, not in batches of N. The scouts emit results per frame.

- Specialists stream to the analyst. Scout outputs are emitted as typed records the moment they are stable, not at the end of an inference batch. The VLM analyst begins reasoning over partial scout state.

- The analyst streams to the judge. The VLM's output is consumed token-by-token, and the judge layer starts its consistency check as soon as a citable claim is emitted, not after the full prose body is generated.

- The decision streams to the operator. The UI updates word-by-word and chip-by-chip, so the operator can act on the high-confidence parts of the decision while the rest is still being produced.

This is the same architectural principle that real-time speech-to-text systems [1] and streaming LLM serving stacks [2] rely on, applied to a multi-model vision pipeline. The properties that make it tractable (bounded per-token latency, streaming-friendly KV cache management, incremental result rendering) are not vision-specific; they come from the LLM serving literature and we inherit them by hosting the analyst on vLLM [3] and rendering through a server-sent-event channel.
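The note does not specify the event schema on that channel. The following is a minimal sketch, assuming JSON payloads and hypothetical `token` events. It frames analyst tokens in the server-sent events `text/event-stream` format: `id:`, `event:`, and `data:` fields, with one event per blank-line-terminated block. The HTTP server and the vLLM client are deliberately left out.

```python
import json
from typing import Iterable, Iterator, Optional

def sse_event(data: dict, event: Optional[str] = None,
              event_id: Optional[str] = None) -> str:
    """Serialize one event in the text/event-stream wire format."""
    lines = []
    if event_id is not None:
        lines.append(f"id: {event_id}")
    if event is not None:
        lines.append(f"event: {event}")
    # Every payload line needs its own "data:" prefix; compact JSON is one line.
    for chunk in json.dumps(data).splitlines():
        lines.append(f"data: {chunk}")
    return "\n".join(lines) + "\n\n"  # a blank line terminates the event

def stream_decision(tokens: Iterable[str]) -> Iterator[str]:
    """Hypothetical: forward analyst tokens to the client as they are produced."""
    for i, token in enumerate(tokens):
        yield sse_event({"text": token}, event="token", event_id=str(i))

print(repr(next(stream_decision(["crack", "at", "weld"]))))
```

Each token is flushed as its own event, so a browser `EventSource` listener can render it before the analyst has finished generating.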

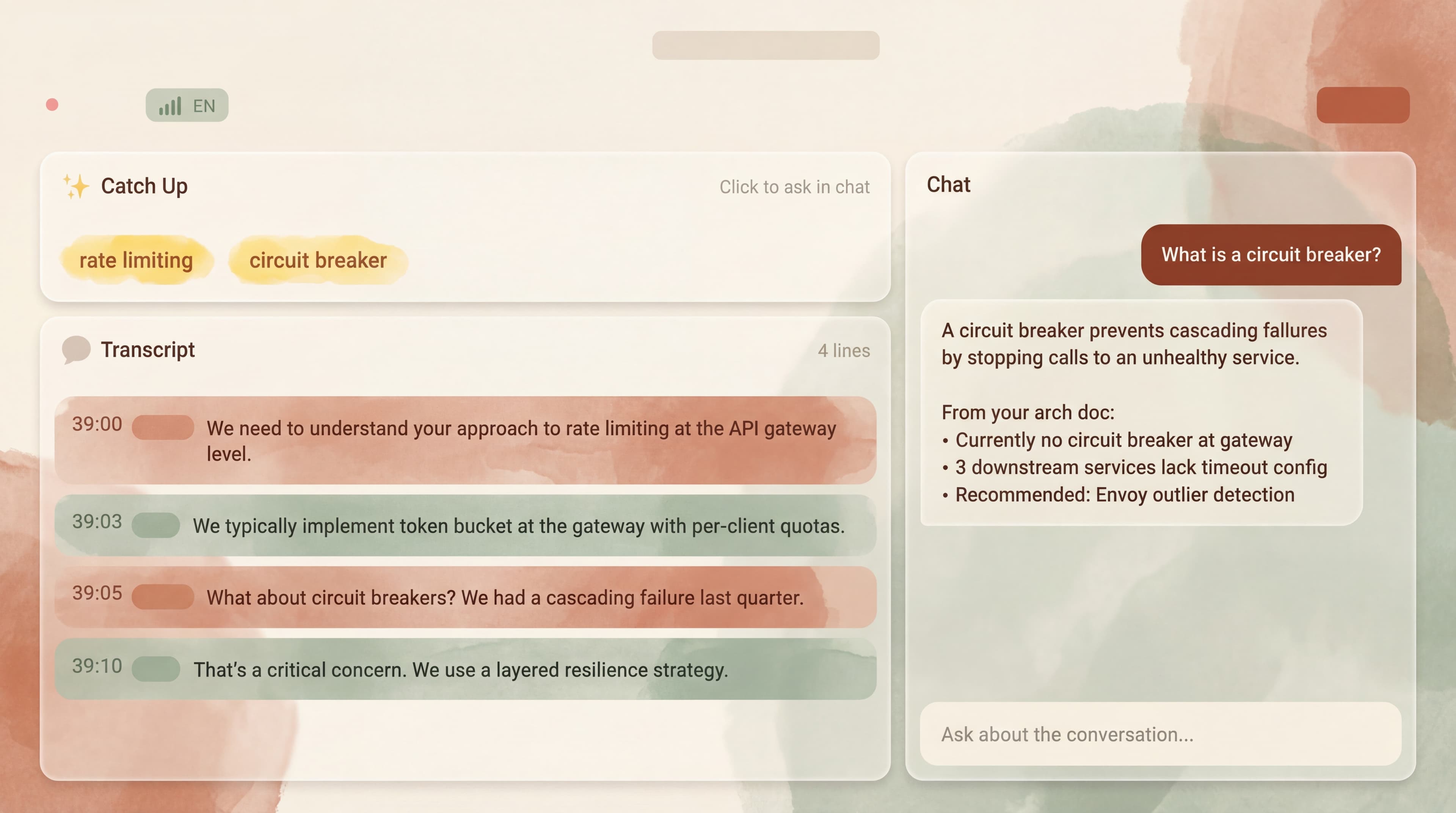

The three-layer streaming pipeline (capture, specialists/analyst, judge/render), with every layer operating on continuous data flows rather than request-response cycles.
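One way to prototype the layering in the figure is a chain of Python async generators, one per layer. Each frame's scout record and each analyst token then moves downstream as soon as it exists. Everything here is hypothetical: the stage functions, the record shape, and the placeholder judge rule. The `EVENTS` log shows the first frame's tokens reaching the operator before the second frame is captured.

```python
import asyncio
from typing import AsyncIterator

EVENTS: list[str] = []  # interleaving of capture and render, for inspection

async def capture(n_frames: int) -> AsyncIterator[int]:
    """Hypothetical sensor: yields frames one at a time, never in batches of N."""
    for frame_id in range(n_frames):
        await asyncio.sleep(0)  # stand-in for waiting on the next frame
        EVENTS.append(f"captured {frame_id}")
        yield frame_id

async def scouts(frames: AsyncIterator[int]) -> AsyncIterator[dict]:
    """Emit one typed record per frame as soon as that frame is done."""
    async for frame_id in frames:
        yield {"frame": frame_id, "detections": [f"obj{frame_id}"]}

async def analyst(records: AsyncIterator[dict]) -> AsyncIterator[str]:
    """Emit tokens one by one rather than a finished paragraph."""
    async for rec in records:
        yield f"frame{rec['frame']}:"
        for det in rec["detections"]:
            yield det

async def judge(tokens: AsyncIterator[str]) -> AsyncIterator[str]:
    """Check each token as it arrives; the non-empty test is a placeholder."""
    async for token in tokens:
        if token:
            yield token

async def render(n_frames: int) -> None:
    async for token in judge(analyst(scouts(capture(n_frames)))):
        EVENTS.append(f"shown {token}")  # a real UI would push this to the operator

asyncio.run(render(2))
print(EVENTS)
```

A chain of async generators is pull-based, so the layers stream but do not run at the same time: while the analyst emits tokens, capture is paused. A production version would more likely decouple the layers with bounded `asyncio.Queue`s, so that a slow stage cannot stall the sensor.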

Where latency actually accumulates

The intuition that "the model is the slow part" is almost always wrong in our setting. The slow parts, in order of typical contribution, are:

1. Frame transport. Moving a high-resolution image from a sensor to the inference host is non-trivial when the network is a 4G modem on a drone or a constrained industrial Ethernet link. We push hardware-accelerated encoding to the edge and decode on the inference host. We tune the codec's I-frame interval for how quickly the next frame becomes decodable, rather than for the best compression ratio.

2. Model warm-up and load. The first inference call on a freshly loaded model is dramatically slower than steady-state. We keep specialists warm in memory, pin GPU contexts, and pre-allocate KV cache for the analyst. None of this is glamorous; all of it is essential.

3. Serial chains where a parallel chain would do. Detection, segmentation, OCR, and depth on the same frame have no data dependency between them. Running them serially is a mistake we have to actively prevent. The scheduler defaults toward batch efficiency, and we override that toward latency.

4. Synchronization barriers. A naive analyst-layer implementation waits for all scouts to finish before reasoning. A latency-aware implementation reasons over whichever scouts have completed, expresses the remaining ones as "pending evidence," and updates the decision when they arrive. The judge layer downstream is what makes this safe: it will not let a decision ship until the pending evidence has either arrived or been explicitly waived.

5. The model itself. Genuinely. Once the four items above are addressed, the actual GPU time is a meaningful fraction of the budget but rarely the dominant one.
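Items 3 and 4 can be sketched together with `asyncio`:

- The scouts for a frame are launched concurrently.
- After a soft deadline, the analyst gets whatever has completed plus a list of pending scouts.
- Stragglers are folded in when they land.
- A judge gate refuses to ship while anything is still pending and not waived.

The scout names, latencies, `soft_deadline_s`, and the helper functions are all assumptions for illustration, with `asyncio.sleep` standing in for inference.

```python
import asyncio

async def run_scout(name: str, latency_s: float) -> tuple[str, str]:
    """Hypothetical scout: sleeps to simulate inference, then returns a result."""
    await asyncio.sleep(latency_s)
    return name, f"{name}-result"

def judge_may_ship(pending: set[str], waived: set[str]) -> bool:
    """Gate: ship only when every still-pending scout has been explicitly waived."""
    return pending <= waived

async def analyse_frame(latencies: dict[str, float], soft_deadline_s: float):
    # Scouts on one frame share no data dependency, so launch them all at once.
    tasks = {asyncio.create_task(run_scout(n, s)): n for n, s in latencies.items()}
    done, pending = await asyncio.wait(set(tasks), timeout=soft_deadline_s)
    # What the analyst can reason over right now, plus the "pending evidence".
    early = dict(t.result() for t in done)
    snapshot = {"evidence": dict(early), "pending": sorted(tasks[t] for t in pending)}
    # Fold in stragglers when they land instead of blocking on them up front.
    final = dict(early)
    if pending:
        late, _ = await asyncio.wait(pending)
        final.update(t.result() for t in late)
    return snapshot, final

snapshot, final = asyncio.run(
    analyse_frame({"detection": 0.0, "segmentation": 0.0, "ocr": 0.2}, soft_deadline_s=0.05)
)
print(snapshot["pending"])
print(judge_may_ship(set(snapshot["pending"]), waived=set()))  # OCR not waived: hold
print(sorted(final))
```

`asyncio.wait` with a `timeout` returns the finished and unfinished sets without cancelling the unfinished tasks. That is what lets the late scouts keep running in the background.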

Architectural trade-offs we have made on purpose

A few choices that look strange on paper and have paid off in production.

Pipeline over end-to-end. There is a recurring temptation to replace a multi-stage pipeline (capture → specialists → VLM → judge) with a single end-to-end model that consumes raw frames and emits decisions. End-to-end models have a latency advantage on paper. In practice we have found pipeline architectures easier to keep inside a tight latency budget, because each stage can be independently warmed, scheduled, and degraded. End-to-end models couple latency to the slowest possible end-to-end path. The same trade-off shows up in speech architectures (pipeline STT-to-LLM versus end-to-end speech-to-speech models such as Moshi [4] or GLM-4-Voice [5]), and the conclusions translate directly.

Bounded-context analyst. The VLM analyst runs with a tight, bounded context window, not the full available evidence. Every additional token of context costs latency, and the marginal token rarely changes the decision. We pre-rank scout outputs and retrieved context-pack chunks by relevance and truncate aggressively. The judge layer is what catches the cases where we truncated too aggressively.
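Here is a minimal sketch of rank-then-truncate packing. It assumes each chunk already carries a relevance score and a token count under the analyst's tokenizer. How relevance is scored is not described here, and `Chunk` and `pack_context` are hypothetical names. The list is cut at the first chunk that would overflow the budget, so nothing ranked below the cut is included, even if it would fit.

```python
from dataclasses import dataclass

@dataclass
class Chunk:
    text: str
    relevance: float  # higher is more relevant; scoring method is out of scope
    tokens: int       # token count under the analyst's tokenizer

def pack_context(chunks: list[Chunk], token_budget: int) -> list[Chunk]:
    """Sort by relevance, then truncate at the first chunk that overflows."""
    packed: list[Chunk] = []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.relevance, reverse=True):
        if used + chunk.tokens > token_budget:
            break  # aggressive truncation: stop here rather than hunt for smaller fits
        packed.append(chunk)
        used += chunk.tokens
    return packed

chunks = [
    Chunk("detections", 0.9, 120),
    Chunk("ocr", 0.7, 80),
    Chunk("manual p.12", 0.4, 300),
    Chunk("history", 0.2, 50),
]
print([c.text for c in pack_context(chunks, token_budget=250)])
```

A greedy variant that skips oversized chunks and keeps going would use the budget more fully, at the cost of admitting less relevant context.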

Speculative decoding on the analyst. For the VLM's analyst output we use draft-then-verify decoding [6], which trades a small increase in per-call compute for a meaningful reduction in tail latency. The technique requires a draft model that is fast and roughly aligned with the target; the alignment quality matters more than the draft model's absolute capability.
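For the greedy (temperature-0) case, draft-then-verify reduces to three steps:

1. The draft proposes k tokens.
2. The target keeps the longest prefix it agrees with.
3. The target appends one token of its own.

The toy below uses deterministic functions as stand-in models. The names and `k=4` are assumptions, and the rejection-sampling rule that [6] uses for stochastic sampling is not shown. The final check confirms that the output matches plain greedy decoding with the target, which is the property that makes the technique safe to use.

```python
from typing import Callable, Sequence

# A greedy "model" maps a token prefix to its most likely next token.
NextToken = Callable[[Sequence[str]], str]

def speculative_greedy(target: NextToken, draft: NextToken,
                       prompt: list[str], max_new: int, k: int = 4) -> list[str]:
    out = list(prompt)
    produced = 0
    while produced < max_new:
        # 1. The cheap draft model proposes up to k tokens autoregressively.
        proposal: list[str] = []
        for _ in range(min(k, max_new - produced)):
            proposal.append(draft(out + proposal))
        # 2. The target checks each proposed position. A real server scores all
        #    positions in one batched forward pass; this toy calls it per position.
        accepted = 0
        for i, token in enumerate(proposal):
            if target(out + proposal[:i]) != token:
                break
            accepted += 1
        out.extend(proposal[:accepted])
        produced += accepted
        # 3. The target contributes one token itself (the correction after a
        #    rejection, or a bonus token after a fully accepted block).
        if produced < max_new:
            out.append(target(out))
            produced += 1
    return out[len(prompt):]

def greedy(model: NextToken, prompt: list[str], max_new: int) -> list[str]:
    """Reference: ordinary one-token-at-a-time greedy decoding."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(model(out))
    return out[len(prompt):]

def target(prefix: Sequence[str]) -> str:
    return "abcde"[len(prefix) % 5]

def draft(prefix: Sequence[str]) -> str:
    # Agrees with the target except when the prefix length is a multiple of 3.
    return target(prefix) if len(prefix) % 3 else "?"

print(speculative_greedy(target, draft, ["<s>"], 12) == greedy(target, ["<s>"], 12))  # True
```

In this toy, the gain per round is set by how many draft tokens survive verification. A draft that often disagrees with the target is rejected early and saves nothing, however capable it is on its own.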

Cancellable everything. Every stage of the pipeline accepts a cancellation token. When a newer frame supersedes the current one (the drone has moved, the camera has refocused), the older frame's downstream work is killed immediately rather than wasted. This is harder to retrofit than to design in, and we recommend designing it in.
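In Python's `asyncio`, `Task.cancel()` can play the role of the cancellation token. The following is a minimal latest-frame-wins sketch, assuming one frame in flight at a time. Submitting a new frame cancels the previous frame's task, and the task's `except asyncio.CancelledError` block is where resources would be released. `process_frame` and `LatestFrameRunner` are hypothetical, with a sleep standing in for the scout, analyst, and judge work.

```python
import asyncio
from typing import Optional

async def process_frame(frame_id: int, log: list[str]) -> None:
    """Hypothetical downstream work for one frame (scouts, analyst, judge)."""
    try:
        await asyncio.sleep(0.1)  # stand-in for inference
        log.append(f"done {frame_id}")
    except asyncio.CancelledError:
        log.append(f"cancelled {frame_id}")  # free GPU slots, drop partial output
        raise  # re-raise so the task ends in the cancelled state

class LatestFrameRunner:
    """Keeps at most one frame in flight; a newer frame cancels the older one."""

    def __init__(self, log: list[str]) -> None:
        self.log = log
        self.current: Optional[asyncio.Task] = None

    def submit(self, frame_id: int) -> asyncio.Task:
        if self.current is not None and not self.current.done():
            self.current.cancel()  # the superseded frame's work is killed, not finished
        self.current = asyncio.create_task(process_frame(frame_id, self.log))
        return self.current

async def demo() -> list[str]:
    log: list[str] = []
    runner = LatestFrameRunner(log)
    runner.submit(1)
    await asyncio.sleep(0.01)  # frame 1 is mid-inference...
    await runner.submit(2)     # ...when frame 2 supersedes it
    return log

result = asyncio.run(demo())
print(result)
```

Re-raising `CancelledError` after cleanup matters. Swallowing it would leave the task looking as if it had completed normally.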

What we do not do

A few things that are tempting and that we have either avoided or backed away from.

We do not use end-to-end speech-to-decision (or video-to-decision) models as the primary path. They will get there eventually; for now the pipeline architecture wins on observability and on the ability to swap individual components without changing the rest of the system. We keep the interfaces between stages clean enough that a future end-to-end model can be slotted in if and when it earns its place.

We do not over-fit to one hardware target. A latency budget that depends on a specific GPU SKU is a latency budget that expires when supply changes. We architect for streaming and parallelism first; we tune for the current hardware second.

We do not chase latency below the operator's reaction time. Once the response is well under the time it takes an operator to perceive and act on it, additional latency engineering produces diminishing returns and starts eating into other budgets: accuracy, audit completeness, context-pack breadth. The right question is not "can we make this faster" but "is faster what the operator needs."

Open problems

- Tail latency under load. Steady-state numbers are good. Under bursty load (multiple operators inspecting in parallel, multiple drones in flight), tail latency degrades faster than throughput-oriented serving stacks suggest. Per-tenant pre-warming and admission control help; we have not solved this in the general case.

- Cross-frame coherence. A pipeline that emits a decision per frame can produce visually flickering output even when each individual decision is correct. Smoothing across frames without introducing the lag that smoothing typically implies is an open design question.

- End-to-end models for the right sub-problems. There are sub-tasks (low-level perception, tightly coupled audio-visual fusion) where end-to-end models likely win. The interface between an end-to-end sub-component and a pipeline-style overall architecture is something we are actively prototyping.
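Per-tenant admission control can be as small as an in-flight counter. The following is a hypothetical sketch with two assumptions. First, it runs in a single asyncio event loop, so no locking is needed because neither method awaits. Second, the policy is to refuse work at the cap rather than queue it. What to do on refusal (drop the frame, keep the previous overlay, or something else) is left to the caller. It illustrates the mechanism only and does not solve the general tail-latency problem above.

```python
from collections import defaultdict

class TenantAdmission:
    """Cap in-flight requests per tenant and refuse new ones at the cap,
    rather than letting them queue and inflate everyone's tail latency."""

    def __init__(self, max_in_flight: int) -> None:
        self.max_in_flight = max_in_flight
        self.in_flight: defaultdict[str, int] = defaultdict(int)

    def try_admit(self, tenant: str) -> bool:
        if self.in_flight[tenant] >= self.max_in_flight:
            return False  # caller sheds load, e.g. skips this frame
        self.in_flight[tenant] += 1
        return True

    def release(self, tenant: str) -> None:
        # Call exactly once per successful try_admit, e.g. in a finally block.
        self.in_flight[tenant] -= 1

adm = TenantAdmission(max_in_flight=2)
drone_a = [adm.try_admit("drone-a") for _ in range(3)]  # third request refused
drone_b = adm.try_admit("drone-b")                       # other tenants unaffected
adm.release("drone-a")
retry = adm.try_admit("drone-a")                         # capacity freed, admitted
print(drone_a, drone_b, retry)
```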

References

- [1] Google Cloud. Speech-to-Text V2: streaming recognition. cloud.google.com/speech-to-text/v2/docs

- [2] OpenAI. Realtime API documentation. platform.openai.com/docs/guides/realtime

- [3] Kwon, W. et al. Efficient Memory Management for Large Language Model Serving with PagedAttention. SOSP 2023. arxiv.org/abs/2309.06180

- [4] Défossez, A. et al. Moshi: a speech-text foundation model for real-time dialogue. Kyutai. github.com/kyutai-labs/moshi

- [5] Zhipu AI. GLM-4-Voice: an end-to-end voice model. github.com/THUDM/GLM-4-Voice

- [6] Leviathan, Y. et al. Fast Inference from Transformers via Speculative Decoding. Google Research. arxiv.org/abs/2211.17192