An engineering note on nav-autonomy-deploy, an open-source production deployment system for LiDAR-based autonomous mobile robots, built on top of CMU's Autonomous Exploration Development Environment.

The navigation algorithms inside autonomous mobile robots have, by most reasonable measures, been solved. FAST-LIO2, a tightly coupled LiDAR-inertial odometry framework from the University of Hong Kong, achieves 100 Hz odometry and mapping while remaining stable under rotations of 1,000°/s (Xu et al., IEEE TRO 2022). FAR Planner, a dynamic visibility graph-based route planner from Carnegie Mellon, won the IROS 2022 Best Student Paper Award for its ability to navigate both known and unknown environments in real time (Yang et al., IROS 2022). These two systems, together with terrain traversability analysis, collision avoidance, and waypoint following modules, form the core of CMU's Autonomous Exploration Development Environment, the navigation stack that powered the CMU-OSU team to a "Most Sectors Explored Award" at the DARPA Subterranean Challenge (Cao et al., ICRA 2022). All of this code is open-source.

CMU's development environment is explicitly designed for both system development and real robot deployment. The Go2 autonomy stack variant includes a complete real-robot launch path with SLAM, route planning, and collision avoidance running on Unitree Go2's onboard computer (Zhang, autonomy_stack_go2). But there is a meaningful gap between a research deployment workflow and a system that can be reproducibly deployed across different robot platforms, managed in production, and operated remotely. Cross-hardware abstraction, containerized environment isolation, map lifecycle management, runtime monitoring, single-command launches: this operational layer does not exist in the academic repositories, and it is not a small layer.

nav-autonomy-deploy is one attempt at that operational layer. It is an open-source deployment system that packages CMU's navigation stack into a containerized, hardware-abstracted, operations-ready form. A single docker compose up -d brings up autonomous navigation on any supported robot platform. This note walks through its architecture and the key engineering decisions behind it.

The navigation stack

The core navigation pipeline inside nav-autonomy-deploy runs five components inside a single Docker container. Understanding what each does, and where it comes from, is important context for the deployment engineering that follows.

FAST-LIO2 handles simultaneous localization and mapping. Unlike feature-based SLAM methods, FAST-LIO2 directly registers raw LiDAR points to the map using a tightly coupled iterated Kalman filter, eliminating the hand-engineered feature extraction step that makes many SLAM systems fragile when switching between LiDAR hardware. It maintains the map in an incremental k-d tree (ikd-Tree) that supports real-time point insertion and deletion. The result is a SLAM system that runs at up to 100 Hz on both Intel and ARM processors, handles rapid motion and cluttered environments, and works across spinning and solid-state LiDARs without reconfiguration (Xu et al., IEEE TRO 2022). In our stack, FAST-LIO2 replaces Point-LIO, which is the default in CMU's Go2 autonomy stack.

FAR Planner provides global route planning. It builds and dynamically maintains a reduced visibility graph by modeling obstacles as polygons, extracting edge points, and computing collision-free paths through the resulting graph. The key property is that it works in both known and unknown environments: given a prior map it plans optimal routes, and in unmapped space it attempts multiple routes while learning the environment layout during navigation (Yang et al., IROS 2022). The planner re-plans at a lower frequency than the local planner and supplies long-distance waypoints, which the local planner then executes.

The local planner from CMU's base autonomy system handles real-time collision avoidance. It pre-generates a library of motion primitives and, at runtime, eliminates those occluded by obstacles to select collision-free paths. It consumes terrain traversability maps from the terrain analysis module, which classifies the local terrain around the robot into traversable and non-traversable regions using the 3D point cloud. A waypoint follower connects the global and local layers, feeding FAR Planner's waypoints to the local planner in a closed control loop.
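The pruning idea behind the local planner can be illustrated with a minimal sketch. This is not CMU's implementation: the straight-line primitives, the clearance parameter, and all function names are assumptions chosen to keep the example small.

```python
import math

def make_primitives(num_paths=7, length=2.0, step=0.25, spread_deg=60.0):
    """Pre-generate straight-line paths fanning out from the robot (hypothetical library)."""
    n_points = int(length / step)
    paths = []
    for i in range(num_paths):
        # Headings evenly spaced across [-spread_deg, +spread_deg] in the robot frame.
        heading = math.radians(-spread_deg + i * (2 * spread_deg) / (num_paths - 1))
        paths.append([
            (k * step * math.cos(heading), k * step * math.sin(heading))
            for k in range(1, n_points + 1)
        ])
    return paths

def collision_free(paths, obstacles, clearance=0.3):
    """Keep only paths whose every sample stays at least `clearance` from all obstacles."""
    return [
        path for path in paths
        if all(math.dist(p, o) >= clearance for p in path for o in obstacles)
    ]

primitives = make_primitives()
# One obstacle point directly ahead of the robot, in the robot frame (metres).
survivors = collision_free(primitives, obstacles=[(1.0, 0.0)])
```

With a single obstacle straight ahead, only the central primitive is eliminated and the planner is left to choose among the remaining six. A real library would use curved, kinematically feasible paths and score the survivors against the goal direction and terrain cost.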

The entire pipeline, from raw LiDAR input to velocity commands, runs on a single thread of execution inside a ROS 2 workspace. CMU reports that the autonomy stack consumes less than 10% CPU overall, with local path planning using less than 1 ms and less than 1% CPU per cycle (cmu-exploration.com). These are important numbers: they mean the navigation algorithms are not the computational bottleneck. The bottleneck, as we will see, is everything else.

Containerized deployment

Containerizing a ROS 2 navigation stack is not like containerizing a web application. The standard assumptions of Docker (isolated networking, stateless processes, ephemeral filesystems) conflict with nearly every requirement of a real-time robotics system that must access physical hardware, discover peers over multicast, and persist maps across runs. Every design decision in our Docker configuration exists to resolve one of these conflicts.

Network mode. ROS 2's default middleware, Fast DDS, uses multicast UDP for node discovery. Docker's bridge networking does not forward multicast traffic between host and container networks. We use network_mode: host, which gives the container direct access to the host's network interfaces. This is a deliberate trade-off: we lose network isolation in exchange for DDS discovery that actually works. For multi-robot setups, we rely on ROS_DOMAIN_ID (default: 42) to partition ROS 2 communication domains instead.
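To see why ROS_DOMAIN_ID partitions traffic, it helps to compute the UDP ports a domain maps to. The sketch below uses the default port parameters from the DDSI-RTPS specification (port base 7400, domain gain 250, participant gain 2). The function names are illustrative, and a DDS vendor configuration may override these defaults.

```python
# Default port-mapping parameters from the DDSI-RTPS specification.
PORT_BASE = 7400
DOMAIN_GAIN = 250
PARTICIPANT_GAIN = 2
D0 = 0   # offset for discovery multicast traffic
D1 = 10  # offset for discovery unicast traffic

def discovery_multicast_port(domain_id):
    """UDP port on which participants in a domain announce themselves."""
    return PORT_BASE + DOMAIN_GAIN * domain_id + D0

def discovery_unicast_port(domain_id, participant_id):
    """UDP port a specific participant listens on for discovery replies."""
    return PORT_BASE + DOMAIN_GAIN * domain_id + D1 + PARTICIPANT_GAIN * participant_id

port_domain_42 = discovery_multicast_port(42)  # 17900
port_domain_0 = discovery_multicast_port(0)    # 7400
```

Two robots on different domain IDs announce on different ports, so their nodes never discover each other even though both containers share the host network.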

Shared memory. Fast DDS uses shared memory as its default transport for intra-host communication. Docker's default shared memory allocation of 64 MB is insufficient for the volume of point cloud and occupancy grid data flowing between ROS 2 nodes. We allocate 8 GB (shm_size: '8gb') to prevent silent message drops, which are otherwise extremely difficult to diagnose: the system appears to run normally, but navigation decisions are based on stale or incomplete data.
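Because an undersized shared memory segment fails silently, a preflight check inside the container can be worthwhile. The following is a minimal sketch using only the Python standard library; the function names are hypothetical and this check is not part of the repository as far as this note describes it.

```python
import shutil

def shm_total_bytes(path="/dev/shm"):
    """Total size of the filesystem mounted at `path` (the shared memory tmpfs by default)."""
    return shutil.disk_usage(path).total

def shm_is_sufficient(required_bytes, path="/dev/shm"):
    """Return True when the shared memory mount is at least `required_bytes` large."""
    return shm_total_bytes(path) >= required_bytes
```

Inside the navigation container, a call such as shm_is_sufficient(8 * 1024**3) would be expected to return True when the 8 GB setting has taken effect (assuming the size is interpreted in binary units), and False under the 64 MB default.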

Hardware access. The container requires direct access to the LiDAR (via Ethernet), motor controller (via serial device, typically /dev/ttyACM0), joystick input devices (/dev/input), and GPU (/dev/dri). We run in privileged mode and explicitly pass through device nodes. The NET_ADMIN and SYS_ADMIN capabilities allow the container to configure the LiDAR subnet at runtime (setting the computer's IP on the LiDAR network interface, configuring the gateway, and establishing the point-to-point connection) without requiring the operator to manually configure host networking before launch.

Hardware abstraction through environment variables. A single .env file is the only thing an operator needs to customize for a new robot. The primary interfaces cover robot platform selection (ROBOT_CONFIG_PATH: mecanum drive, Unitree Go2, or Unitree G1), LiDAR network configuration (LIDAR_INTERFACE, LIDAR_IP, LIDAR_COMPUTER_IP, LIDAR_GATEWAY), motor controller identification (MOTOR_SERIAL_DEVICE), Unitree-specific control parameters (USE_UNITREE, UNITREE_IP, UNITREE_CONN), GPU runtime selection (DOCKER_RUNTIME: runc or nvidia), and the critical MAP_PATH variable that switches the entire system between mapping mode (empty: the robot builds a new map) and localization mode (path to a PCD file: the robot localizes against an existing map). Additional variables control SLAM resolution, monitoring intervals, camera settings, and network bridge configuration. The same Docker image, iserverobotics/nav_autonomy, runs unchanged across all supported platforms; only the .env differs.
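The way MAP_PATH switches modes can be sketched in a few lines. The parser below is a simplified illustration, not the project's own loader, and every value in the example file is a placeholder rather than a project default.

```python
def parse_env(text):
    """Parse simple KEY=VALUE lines; blank lines and # comments are skipped."""
    env = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        env[key.strip()] = value.strip().strip("'\"")
    return env

def navigation_mode(env):
    """Empty or missing MAP_PATH means mapping; a PCD path means localization."""
    return "localization" if env.get("MAP_PATH", "") else "mapping"

EXAMPLE_ENV = """
# Placeholder values for illustration only.
LIDAR_INTERFACE=eth0
LIDAR_IP=192.168.1.100
MOTOR_SERIAL_DEVICE=/dev/ttyACM0
DOCKER_RUNTIME=nvidia
MAP_PATH=
"""

env = parse_env(EXAMPLE_ENV)
mode = navigation_mode(env)  # "mapping", because MAP_PATH is empty
```

Setting MAP_PATH to a PCD file path (for example a hypothetical /maps/site.pcd) flips the same configuration into localization mode without touching any other variable.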

Dual ROS 2 distribution support. The system supports both ROS 2 Jazzy (default, Ubuntu 24.04) and Humble (Ubuntu 22.04). This matters in practice because many deployed robots (particularly Jetson-based systems) cannot easily upgrade their base OS. The run.sh fallback script accepts --humble or --jazzy flags and pulls the corresponding image tag, allowing teams to match their existing infrastructure without rebuilding.

Map lifecycle management

SLAM research focuses on building accurate maps. But in production, map building is a small fraction of the problem. The harder questions are: when should a map be rebuilt? If the robot has built multiple maps over several runs, which one should it use? When the robot restarts, how does it relocalize to an existing map? And once the robot is localized, how does it execute repeatable routes?

These questions are addressed by a separate container, iserve_map, which runs alongside the primary navigation container and manages the full map lifecycle through four coordinated components.

Map builder. The map_builder node constructs voxelized 3D point cloud maps from the odometry stream at a configurable resolution (default: 0.05 m per voxel, with up to 5 million points). It auto-saves the current map every 10 seconds to persistent storage. It also implements spatial deduplication of the odometry input, which is the part that matters for map quality: incoming poses are discretized into a 0.5 m spatial grid with 25° heading bins, and only poses that fall into new grid cells are used to update the map. This prevents the map from being dominated by regions where the robot spent the most time (e.g., waiting at a waypoint) and produces more spatially balanced coverage.
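The deduplication rule can be sketched as a hash of discretized poses. The grid size and heading bin width come from the description above; the planar (x, y, yaw) pose, the bin origin at 0°, and the class and function names are assumptions made for illustration.

```python
import math

GRID_M = 0.5            # spatial cell size in metres
HEADING_BIN_DEG = 25.0  # heading bin width in degrees

def pose_key(x, y, yaw_rad):
    """Discretize a planar pose into a (cell_x, cell_y, heading_bin) key."""
    heading_deg = math.degrees(yaw_rad) % 360.0
    # 25 does not divide 360 evenly, so with bins starting at 0 the last bin is narrower.
    return (
        math.floor(x / GRID_M),
        math.floor(y / GRID_M),
        int(heading_deg // HEADING_BIN_DEG),
    )

class PoseDeduplicator:
    """Accept a pose only the first time its grid cell and heading bin are visited."""

    def __init__(self):
        self._seen = set()

    def is_new(self, x, y, yaw_rad):
        key = pose_key(x, y, yaw_rad)
        if key in self._seen:
            return False
        self._seen.add(key)
        return True

dedup = PoseDeduplicator()
first = dedup.is_new(0.1, 0.1, 0.0)                 # new cell
waiting = dedup.is_new(0.4, 0.3, math.radians(10))  # same cell and heading bin
moved = dedup.is_new(0.6, 0.1, 0.0)                 # neighbouring cell
turned = dedup.is_new(0.1, 0.1, math.radians(30))   # same cell, new heading bin
```

A robot idling at a waypoint keeps producing poses that map to an already-seen key, so those scans are skipped and the map is not oversampled at that spot.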

Map optimizer. When multiple maps exist (from different runs, different times of day, or different environmental conditions), the map_optimizer evaluates and ranks them using a weighted scoring function across three criteria: coverage (weight: 0.4), measuring how much of the operating area the map captures; recency (weight: 0.3), favoring maps that reflect the current state of the environment; and point count (weight: 0.3), as a proxy for map density and completeness. The optimizer exposes a ROS 2 service (/map_optimizer/evaluate_maps) that scores all maps in a given directory and returns a ranked recommendation.
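The ranking reduces to a weighted sum. The weights below are the ones stated above; how each criterion is normalized to the range [0, 1] is not specified here, so the sketch simply assumes the inputs are already normalized. The dataclass and function names are hypothetical.

```python
from dataclasses import dataclass

WEIGHTS = {"coverage": 0.4, "recency": 0.3, "point_count": 0.3}

@dataclass
class MapCandidate:
    name: str
    coverage: float     # assumed normalized to [0, 1]
    recency: float      # assumed normalized to [0, 1], 1.0 = newest
    point_count: float  # assumed normalized to [0, 1]

def score(candidate):
    """Weighted sum of the three criteria."""
    return (
        WEIGHTS["coverage"] * candidate.coverage
        + WEIGHTS["recency"] * candidate.recency
        + WEIGHTS["point_count"] * candidate.point_count
    )

def rank(candidates):
    """Return candidates ordered from best to worst score."""
    return sorted(candidates, key=score, reverse=True)

older = MapCandidate("older_run", coverage=0.9, recency=0.2, point_count=0.8)
newer = MapCandidate("newer_run", coverage=0.7, recency=1.0, point_count=0.6)
ranked = rank([older, newer])
```

Here the older map scores 0.36 + 0.06 + 0.24 = 0.66 and the newer one 0.28 + 0.30 + 0.18 = 0.76, so the newer map ranks first despite its lower coverage.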

Map switcher. The map_switcher acts on the optimizer's recommendation. It supports automatic switching between SLAM methods and triggers ICP-based relocalization when loading a new map. The relocalization service (/localizer/relocalize) takes a PCD path and an initial pose estimate, then uses iterative closest point matching to align the robot's current scan against the target map. This is the component that enables a robot to resume operation after a restart without manual intervention: it loads the recommended map, relocalizes, and hands control back to the navigation pipeline.

Pose repeater. Once the robot is localized, the pose_repeater enables repeatable autonomous routes. It records goal poses as the robot navigates (either via operator commands or autonomous exploration), then replays them on demand. The replay supports configurable loop counts: a repeat_count of 0 means infinite looping, enabling 24/7 patrol patterns. It subscribes to /goal_pose for recording and publishes to the same topic for replay, monitors /far_reach_goal_status to confirm waypoint arrival, and logs odometry at 20 Hz for post-run analysis. The result is a system where an operator can manually drive a patrol route once, then let the robot repeat it indefinitely.
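The loop-count semantics can be sketched as a generator. This is a simplified illustration with hypothetical names: it captures only the ordering of goals, while the real node would wait for arrival confirmation on /far_reach_goal_status before publishing the next goal.

```python
import itertools

def replay_goals(goals, repeat_count):
    """Yield (lap, goal) pairs in recorded order; repeat_count == 0 loops forever."""
    if repeat_count < 0:
        raise ValueError("repeat_count must be >= 0")
    if not goals:
        return  # nothing recorded, so avoid spinning in an empty infinite loop
    laps = itertools.count() if repeat_count == 0 else range(repeat_count)
    for lap in laps:
        for goal in goals:
            yield lap, goal

recorded = ["dock", "aisle_3", "loading_bay"]  # placeholder goal names
two_laps = list(replay_goals(recorded, 2))
# Take only the first four goals of an endless patrol.
patrol_start = list(itertools.islice(replay_goals(recorded, 0), 4))
```

Treating 0 as "no limit" keeps the common patrol case to a single parameter, at the cost of needing a separate way to express "do not replay at all".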

Together, these four components form a closed loop: the robot builds maps, evaluates them, selects the best one, relocalizes against it, and executes repeatable missions. This is the layer that, in our experience, consumes the majority of the engineering effort in a production navigation deployment, and is almost entirely absent from academic navigation stacks.

Production monitoring and remote operation

A navigation system running on a robot in the field is not the same as one running in a lab. The operator may be in a different building, or a different country. The system must report its own health, and the operator must be able to intervene without physical access to the machine.

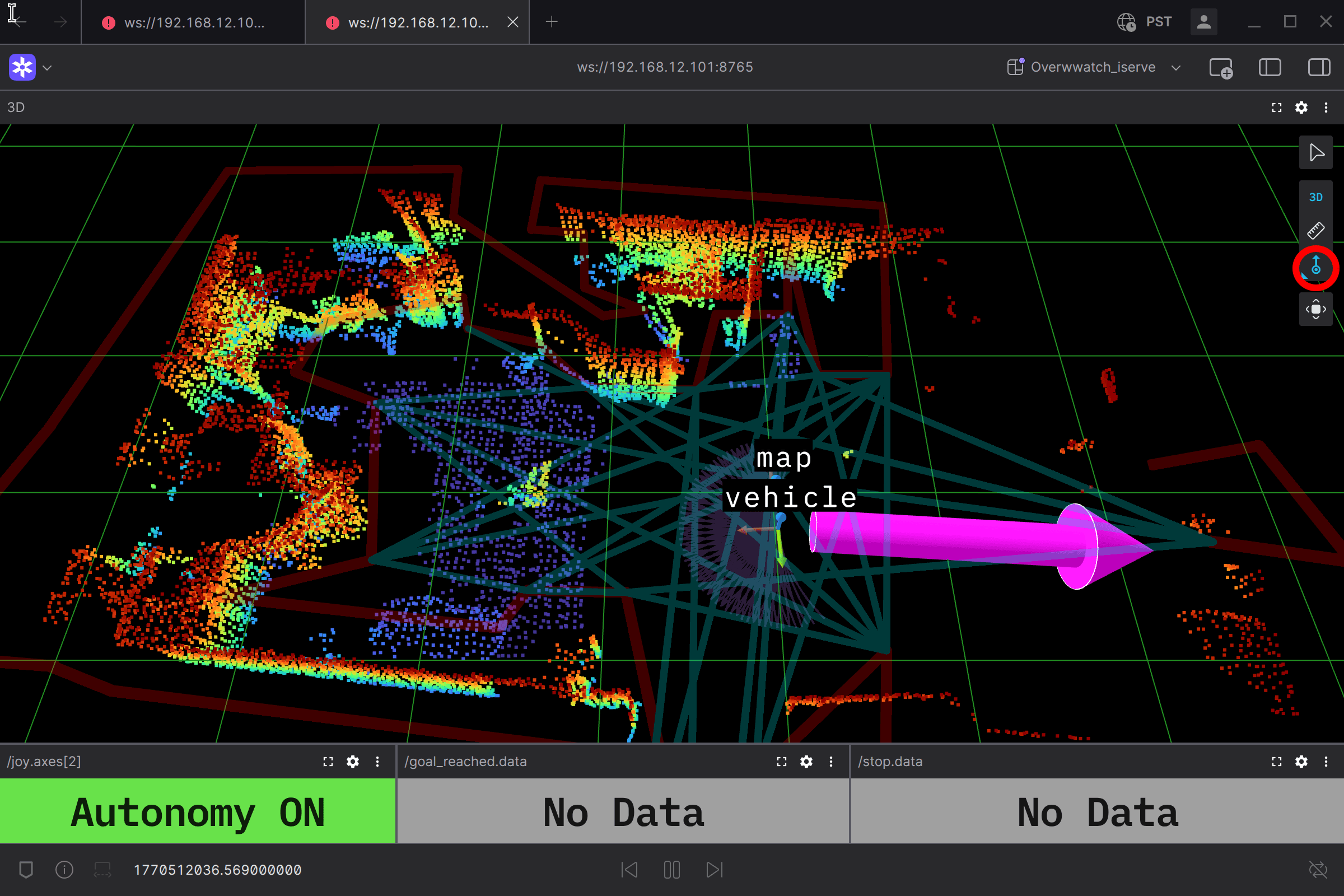

The default operator interface is Foxglove, connected via WebSocket on port 8765 (navigation container) and 8766 (map/ops container). Foxglove is the default not because it replaces RViz, which remains available for local debugging via USE_RVIZ=true, but because production operation requires a remote-first interface that works over any network connection without X11 forwarding or GPU access on the operator's machine.

The pre-configured Overwatch dashboard provides three real-time status indicators that reflect a specific operator model. The Autonomy indicator reads the joystick's mode-switch axis (/joy.axes[2]) and displays whether the robot is in autonomous or manual mode. The Goal Reached indicator shows whether the robot has arrived at its current target. The Stop indicator distinguishes three states: OK (normal operation), Speed Stop (the robot has slowed due to a nearby obstacle), and Full Stop (the robot has halted entirely). Below these indicators, a velocity plot shows cmd_vel linear and angular components over a 30-second window, giving the operator immediate feedback on whether the robot is moving as expected. The 3D panel displays the point cloud map, planned path, free paths, navigation boundary, and the visibility graph used by FAR Planner. The operator can publish a new goal pose directly by clicking on the map.

The iserve_map container also runs a resource monitor that samples the navigation container's CPU and memory usage via the Docker socket at a configurable interval (default: 1 second), writing time-series data to persistent storage. A log analyzer runs alongside it, parsing the navigation container's stdout/stderr and producing summary JSON files at regular intervals (default: 60 seconds). These are deliberately simple tools (not a full observability stack), but they provide the minimum viable telemetry for diagnosing issues in a deployed system: was the CPU pegged when navigation failed? Did a specific ROS node crash? When did the anomaly first appear in the logs?
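For readers building a similar monitor, the sketch below shows how a CPU percentage is conventionally derived from one sample of the Docker Engine stats endpoint, following the formula in the Docker API documentation. Whether the project's resource monitor computes it this way is an assumption, and the sample values are invented for illustration.

```python
def cpu_percent(stats):
    """CPU usage of a container as a percentage, from one Docker stats sample."""
    cpu = stats["cpu_stats"]
    pre = stats["precpu_stats"]
    cpu_delta = cpu["cpu_usage"]["total_usage"] - pre["cpu_usage"]["total_usage"]
    system_delta = cpu["system_cpu_usage"] - pre.get("system_cpu_usage", 0)
    if system_delta <= 0 or cpu_delta < 0:
        return 0.0  # counters reset or no elapsed time between samples
    return cpu_delta / system_delta * cpu["online_cpus"] * 100.0

def memory_mib(stats):
    """Raw memory usage in MiB (page cache is not subtracted in this sketch)."""
    return stats["memory_stats"]["usage"] / (1024 ** 2)

# Invented sample with the same field layout as a Docker stats response.
sample = {
    "cpu_stats": {
        "cpu_usage": {"total_usage": 400_000_000},
        "system_cpu_usage": 20_000_000_000,
        "online_cpus": 8,
    },
    "precpu_stats": {
        "cpu_usage": {"total_usage": 200_000_000},
        "system_cpu_usage": 10_000_000_000,
    },
    "memory_stats": {"usage": 536_870_912},
}

cpu = cpu_percent(sample)
mem = memory_mib(sample)
```

On this scale 100% corresponds to one fully used core, so an 8-core machine tops out at 800%.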

What this stack points toward

The technical lineage of this system traces to subterranean exploration. CMU's Autonomous Exploration Development Environment was the navigation backbone of the CMU-OSU team in the DARPA Subterranean Challenge, where robots navigated GPS-denied, unstructured, and visually degraded environments such as tunnel networks, urban underground infrastructure, and natural cave systems (Cao et al., Science Robotics 2023). The challenge required robust navigation in conditions where lighting was absent, terrain was uneven, and communication with the surface was intermittent at best.

A LiDAR-first architecture has a structural advantage in some of these conditions. LiDAR-based systems can retain useful geometric signal in low-light and certain visually degraded environments where RGB cameras fail entirely. However, this advantage has limits: dense smoke, heavy dust, and fog also degrade LiDAR returns, and real deployment in such conditions typically requires smoke filtration algorithms, multi-modal sensor fusion, or complementary modalities like thermal cameras and radar. The current system does not include smoke-robust perception, fire-hardening, or evaluation under degraded-visibility conditions. But the DARPA SubT lineage, combined with the containerized, hardware-abstracted deployment approach, makes this a natural direction for future development. The architectural decisions made for production deployment in controlled environments (map lifecycle management, remote monitoring, hardware abstraction) are the same decisions that would need to be in place before tackling more extreme scenarios: disaster response, post-earthquake search, smoke-filled industrial facilities.

Repository and what's next

The repository is at github.com/iServeRobotics/nav-autonomy-deploy. On a machine with Docker installed, docker compose up -d starts the navigation stack. Edit .env for your hardware, connect Foxglove to ws://<robot-ip>:8765, and send a goal pose. Contributions, issues, and forks are welcome.

The harder problems for outdoor autonomy (degraded-visibility perception, multi-modal sensor fusion, long-horizon operation in unstructured sites) live above this layer, not in it. The engineering layers documented here (containerization, map lifecycle, remote operation, monitoring) are mostly the prerequisites that have to be in place before any of those research questions become tractable on a real fleet.

References

- W. Xu, Y. Cai, D. He, J. Lin, F. Zhang. "FAST-LIO2: Fast Direct LiDAR-Inertial Odometry." IEEE Transactions on Robotics, 38(4), pp. 2053–2073, 2022.

- F. Yang, C. Cao, H. Zhu, J. Oh, J. Zhang. "FAR Planner: Fast, Attemptable Route Planner using Dynamic Visibility Update." IEEE/RSJ Intl. Conf. on Intelligent Robots and Systems (IROS), Kyoto, Japan, 2022. (Best Student Paper Award)

- C. Cao, H. Zhu, F. Yang, Y. Xia, H. Choset, J. Oh, J. Zhang. "Autonomous Exploration Development Environment and the Planning Algorithms." IEEE Intl. Conf. on Robotics and Automation (ICRA), Philadelphia, PA, 2022.

- C. Cao, H. Zhu, Z. Ren, H. Choset, J. Zhang. "Representation Granularity Enables Time-Efficient Autonomous Exploration in Large, Complex Worlds." Science Robotics, 8(80), 2023.

- Ji Zhang. CMU Autonomy Stack for Unitree Go2. github.com/jizhang-cmu/autonomy_stack_go2

- DARPA Subterranean Challenge. Final event, Louisville Mega Cavern, September 2021. CMU-OSU Team, "Most Sectors Explored Award."

- Autonomous Exploration Development Environment. cmu-exploration.com