I've spent recent months discussing a single question with founders, engineers, and operators: what should organizations look like in the age of AI? From those conversations, and from building this way myself, I've come to believe that the shape of the organization itself is the most underestimated, and most consequential, competitive variable for next-generation companies in the AI era. Not just the models. Not just the tools. The org chart.

Below, I share three shifts in thinking that my team has adopted in practice, alongside the research that supports, and complicates, each one.

Shift 1: Assume AI can do it. Intervene only when it can't.

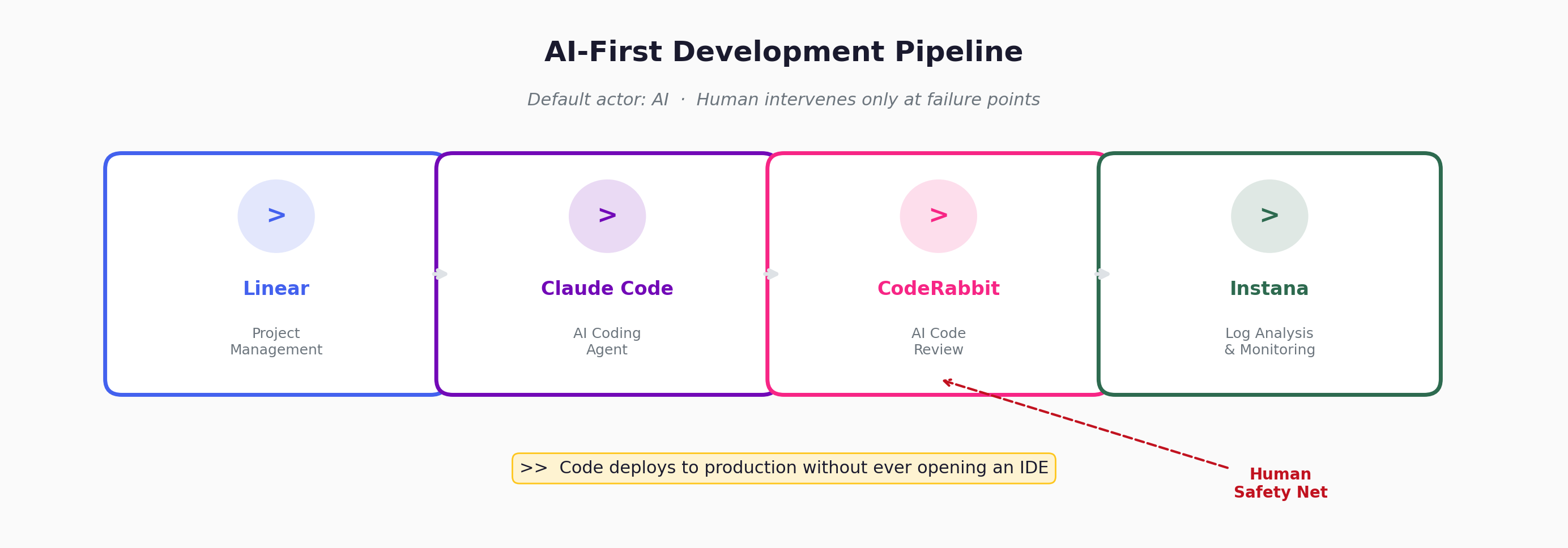

In our development workflow, the default actor is not a human; it is AI. The entire pipeline is designed around this assumption. Design documents, coding, code review, post-deployment monitoring: AI handles the first pass on all of it. Our toolchain integrates Linear for project management, AI coding agents like Claude Code for implementation, CodeRabbit for automated code review, and Instana for log analysis and monitoring. Under this architecture, AI executes and AI screens; work escalates to a human only when the AI layer flags an issue or when the change touches irreversibility boundaries. It is entirely possible for low-risk code to be deployed to production without a single engineer ever opening an IDE.

The last safety net in this pipeline is code review. And this is where the real question emerges: a traditional human code review might take one to two days. AI code review takes ten minutes. Are you willing to accept a small incremental risk in exchange for that speed? For a small company, especially for non-security-critical updates, the answer is almost always yes. If something breaks, in many cases you revert the commit. But "revert and done" only covers a subset of failures: database migrations, data corruption, external API calls with side effects, sent notifications, and payment transactions cannot be undone with a git revert. The AI-first pipeline requires deliberate guardrails at these irreversibility boundaries, not just fast rollback on the happy path.
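
One way to picture the guardrail described above is a small routing function that decides whether a change may ship on AI review alone. This is an illustrative sketch rather than a description of any real pipeline: the `Change` fields, the category names in `IRREVERSIBLE_KINDS`, and the `route` function are all hypothetical, chosen only to mirror the irreversible cases listed above.

```python
from dataclasses import dataclass, field

# Hypothetical labels for changes that a git revert cannot undo.
IRREVERSIBLE_KINDS = {
    "db_migration",
    "bulk_data_write",           # risk of data corruption
    "external_api_side_effect",
    "notification",
    "payment",
}


@dataclass
class Change:
    title: str
    touches: set = field(default_factory=set)  # kinds of side effects involved
    ai_review_flagged: bool = False             # the AI reviewer raised an issue
    security_critical: bool = False


def route(change: Change) -> str:
    """Return 'human_review' when a person must look, else 'auto_deploy'."""
    if change.ai_review_flagged or change.security_critical:
        return "human_review"
    if change.touches & IRREVERSIBLE_KINDS:
        # Fast rollback does not help here, so a human gates the change.
        return "human_review"
    return "auto_deploy"


print(route(Change("Fix typo in settings page")))
print(route(Change("Add billing column", touches={"db_migration"})))
```

The point of the design is that the default branch is `auto_deploy`: a human is pulled in by explicit conditions, not by habit.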

"Let AI do what AI can do. When something goes wrong, a human fixes it." The adoption of AI-powered code review is a textbook case of this mental model shift. It is not about whether AI is perfect. It is about whether the cost of occasional failure is lower than the cost of perpetual human bottlenecks.

What the research says

Shopify has operationalized this principle at scale. CEO Tobi Lütke's April 2025 internal memo declared AI usage a baseline expectation, requiring teams to demonstrate why AI cannot do the work before requesting additional headcount. VP of Engineering Farhan Thawar ordered 3,000 Cursor licenses within months, and the fastest-growing user groups turned out to be not engineers, but support and revenue teams.

Klarna executed the most aggressive version of this strategy: according to CEO Sebastian Siemiatkowski's public statements, the company reduced headcount from roughly 5,500 to 3,400, a reduction of nearly 40%, while revenue per employee surged from $400,000 to $700,000. However, by mid-2025, Siemiatkowski acknowledged the company had overcorrected: customer satisfaction metrics declined per Klarna's own reporting, and the company began rehiring human agents for complex interactions. The Klarna reversal is instructive: "AI-first" does not mean "AI-only." The fallback path, meaning the human who intervenes when things break, must be robust and intentional, not an afterthought.

GitHub's randomized controlled trial found developers completed tasks 55.8% faster with Copilot. But the most rigorous study to date, METR's July 2025 trial of experienced open-source developers, found participants were actually 19% slower with AI tools while believing they were 20% faster, a gap of roughly 39 percentage points between perception and reality. CodeRabbit's own analysis found AI-generated code contained 1.7x more issues overall and 2.74x the rate of XSS vulnerabilities compared to human code.

These findings don't invalidate the AI-first workflow. They sharpen the design challenge: the operating model is "AI executes and screens, humans handle the exceptions," not "AI does everything unsupervised." The human-in-the-loop is not decoration, but neither is it the default actor. It must be positioned at the right chokepoints, code review being the most critical one, and the organization must have a culture that treats "revert and fix" as a normal operating procedure, not a failure state.

Shift 2: Reduce coordination overhead. Own outcomes, not processes.

The second shift is moving from process-based division of labor to outcome-based division of labor. Each team member should be responsible not for an intermediate work step, but for the final deliverable itself. The less humans need to coordinate with each other, the lower the communication cost. And for tasks where AI has raised individual capacity enough to make decomposition optional, outcome ownership becomes a more efficient form of collaboration than process decomposition.

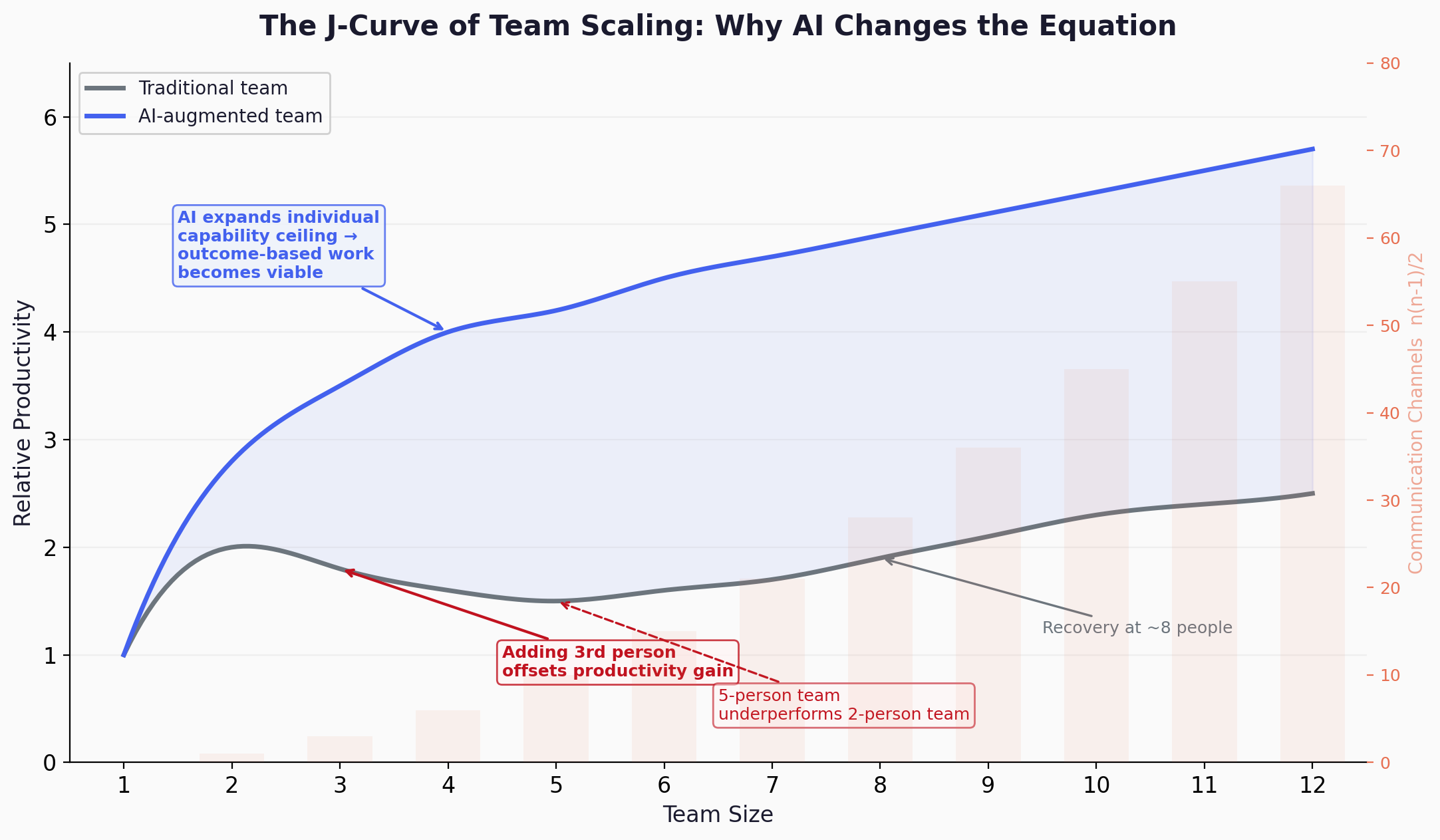

The reason process-based division of labor became the default was simple: projects grew too complex for any one person to handle alone, so work had to be decomposed across specialists, and coordination became the price of that decomposition. But anyone who has led a development team knows the nonlinearity of team scaling. When a team grows from two or three people to five, productivity does not increase linearly; in my experience, it temporarily drops. From what I've seen, a five-person team can produce less than the original two-person team before productivity recovers at larger sizes. The exact inflection points vary with task coupling, management quality, and domain, but the general shape is consistent: coordination cost grows faster than capacity in the early scaling phase. A two-person team operates with near-zero overhead. The moment a third person joins, the coordination burden can more than offset the additional capacity.

AI has now dramatically expanded the capability ceiling of each individual. The scope of work that once required decomposition across a team can increasingly be handled by one or two people with AI assistance. This is the missing link: Brooks's law and QSM's project data, both covered in the research notes below, show that coordination costs punish team growth; AI changes the equation by raising individual capacity enough that many tasks no longer need to be decomposed in the first place. As a result, outcome-based division of labor, once aspirational, is becoming a viable engineering choice. Instead of decomposing work into small, interdependent, sequential tasks, we can define large, independently verifiable, parallelizable blocks as the unit of collaboration. Communication costs in development drop significantly, though not to zero, since cross-cutting concerns like shared APIs, data models, and deployment coordination still require human alignment. High-efficiency parallel execution becomes far more practical as a result.

What the research says

Fred Brooks formalized the coordination problem in 1975: communication channels in a team scale as n(n-1)/2, so a 10-person team has 45 channels compared to just 10 for a 5-person team. QSM's analysis of 1,060 software projects (drawn from a database of over 10,000) found empirically that 3-7 person teams achieved the highest productivity, shortest schedules, and lowest costs. Their most striking finding: larger teams reduced schedules by roughly 24% for small projects and 6% for large ones, but at 3-4x the cost and 2-3x the defect density. This is consistent with the coordination penalty I described from firsthand experience, now observed at scale.
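
The channel formula is easy to check by hand, and a few lines of code make the growth rate concrete. This is a minimal sketch; the function name `channels` and the team sizes are my own choices for illustration.

```python
def channels(n: int) -> int:
    """Pairwise communication channels in a team of n people: n(n-1)/2."""
    return n * (n - 1) // 2


for size in (2, 3, 5, 10):
    print(f"{size:>2} people -> {channels(size):>2} channels")
# Prints:
#  2 people ->  1 channels
#  3 people ->  3 channels
#  5 people -> 10 channels
# 10 people -> 45 channels
```

Going from two people to three triples the number of channels while adding only 50% more capacity, which is the early-scaling penalty in its simplest form.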

Microsoft Research found that developers spend approximately 11% of their time actually writing code; the rest goes to meetings, reviews, communication, and documentation. If AI primarily accelerates the coding slice, overall gains will be modest unless the surrounding coordination overhead is structurally removed. This supports the argument for outcome-based work: if the largest time sink is coordination rather than coding, then making individuals faster only helps if you also restructure how work is divided. Outcome ownership is one way to do that. It is not necessarily the only way, but it is a natural fit when AI raises individual capacity enough to make fine-grained decomposition less necessary.
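
To see why the gains stay modest, the 11% figure can be plugged into an Amdahl's-law style estimate. This is a back-of-the-envelope sketch under a strong assumption that is mine, not the study's: only the coding share gets faster, and every other activity takes exactly as long as before. The function name `overall_speedup` is likewise illustrative.

```python
def overall_speedup(coding_share: float, coding_speedup: float) -> float:
    """Amdahl-style estimate: only the coding share of total time gets faster."""
    remaining_time = (1.0 - coding_share) + coding_share / coding_speedup
    return 1.0 / remaining_time


CODING_SHARE = 0.11  # the ~11% figure cited above

for factor in (2, 10, float("inf")):
    gain = overall_speedup(CODING_SHARE, factor) - 1.0
    print(f"coding {factor}x faster -> overall {gain:.1%} faster")
# Prints:
# coding 2x faster -> overall 5.8% faster
# coding 10x faster -> overall 11.0% faster
# coding infx faster -> overall 12.4% faster
```

Under these assumptions, even infinitely fast coding caps the overall gain at about 12%. Anything beyond that has to come from shrinking the other 89%.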

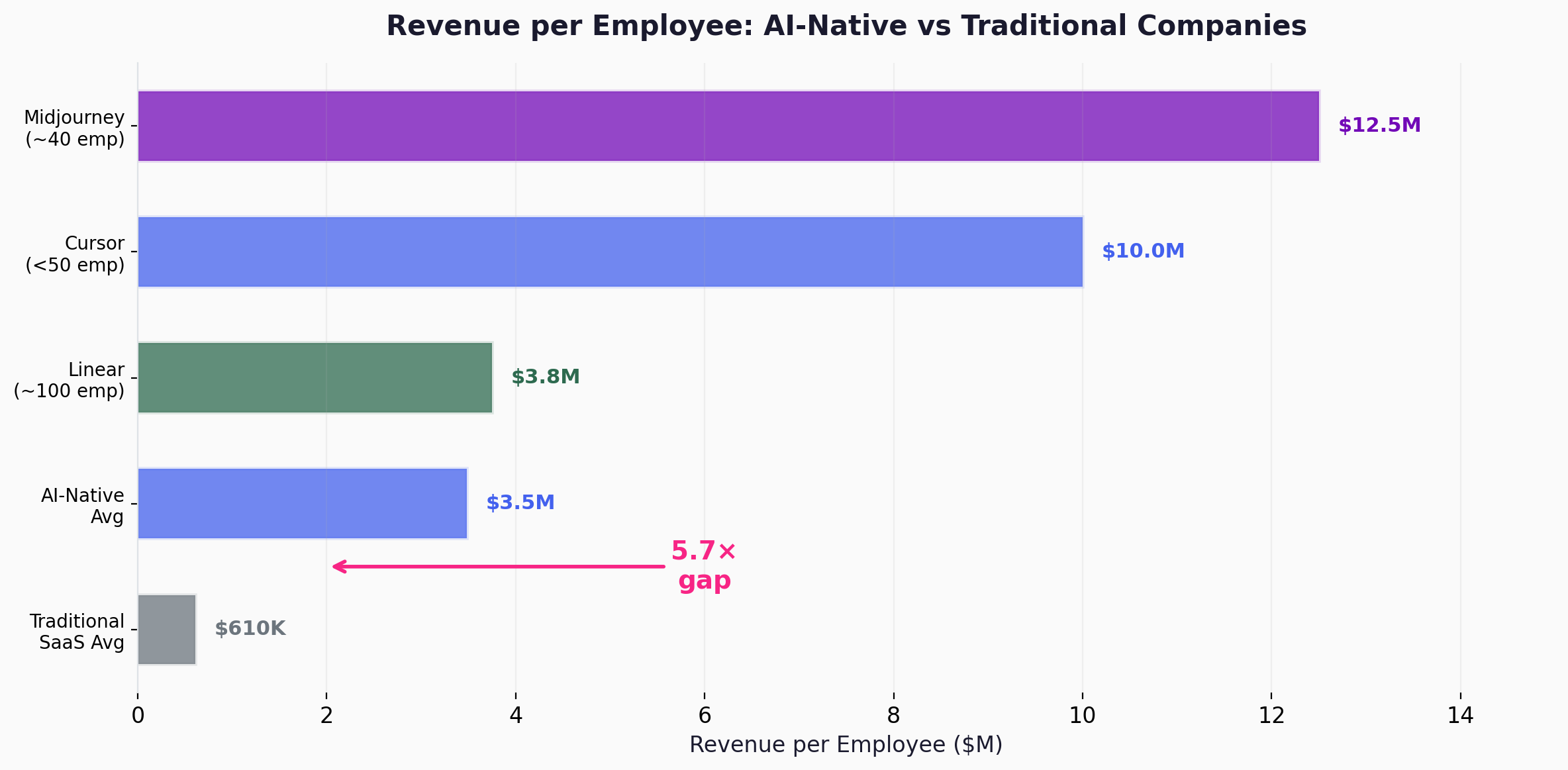

The evidence for AI-driven team compression is emerging from analyst estimates and industry rankings, though not yet from audited financials. Midjourney reportedly reached an estimated $200M ARR with only 11 employees, then nearly $500M with roughly 40. Cursor reached $500M ARR with fewer than 50 people, one of the fastest ramps in SaaS history according to Bloomberg. Revenue per employee at top lean AI startups averages an estimated $3.48M versus $610K at traditional SaaS companies, a 5.7x efficiency gap, per Jeremiah Owyang's analysis. These numbers demonstrate extraordinary leverage, but they don't isolate the cause. Product-market fit, timing, the nature of the product (software with near-zero marginal cost), and founder quality all contribute. What I can say is that these companies share a structural trait (small teams with minimal handoff layers and high individual ownership) and that this trait is at least consistent with the outcome-based model. An important caveat: these are all AI-native or high-leverage startups. Whether the same ratios hold for larger, more regulated, or legacy-laden organizations is an open question. The evidence base is narrow, and I want to be honest about that.

A longitudinal study (2023-2025) tracking a software development organization found that AI accelerated individual tasks but left persistent collaboration issues, namely performance accountability and fragile communication, unresolved. This supports the argument from a different angle: AI solves the execution bottleneck but not the coordination bottleneck. Organizations that fail to restructure around outcomes will find their communication costs unchanged, even as individual output increases.

Shift 3: From engineers to builders. Compress product and implementation into one role.

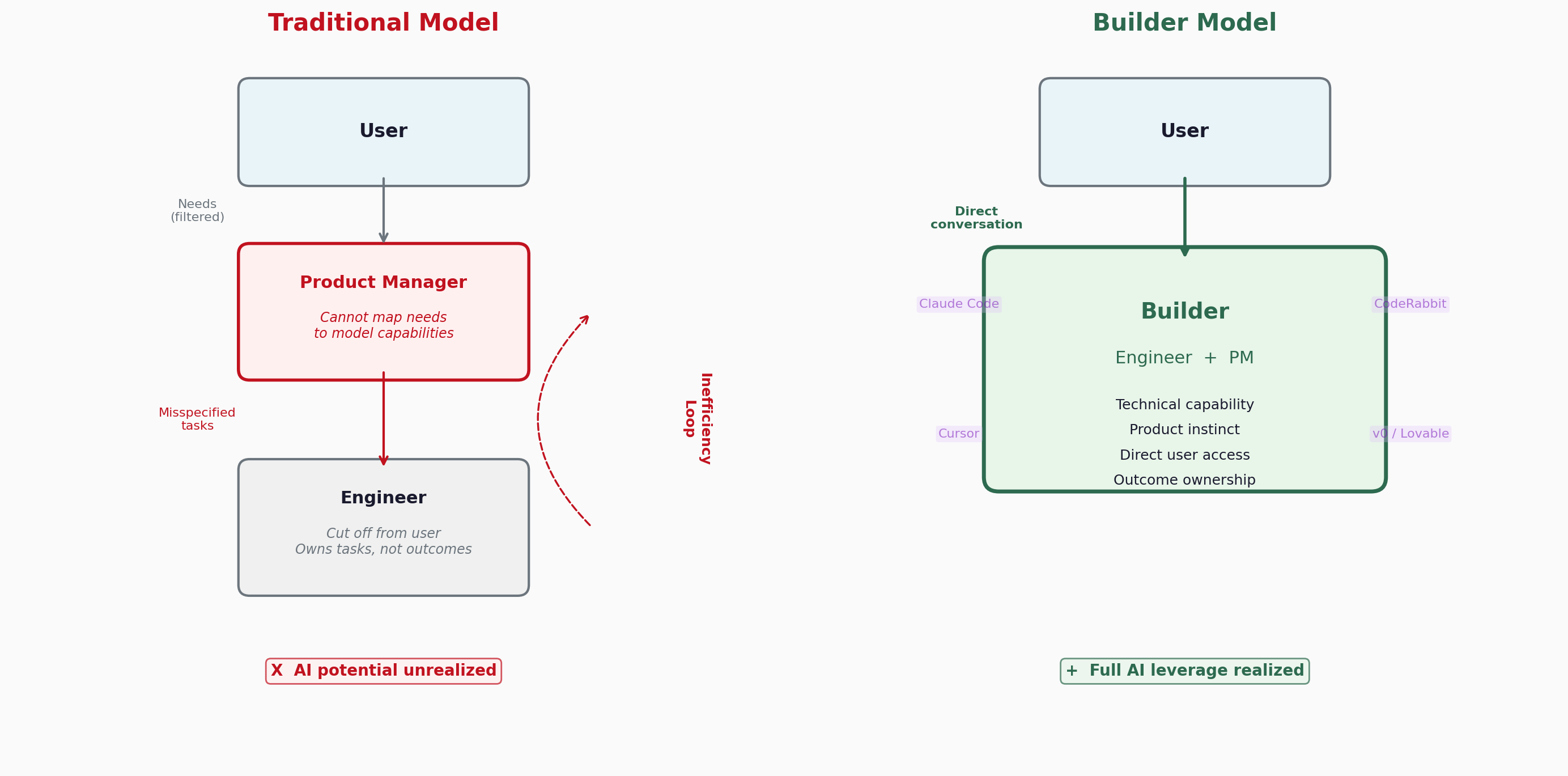

The logic is straightforward: the engineering team is the entity closest to the actual deliverable. The person who builds the product should also own the product outcome, and in an AI-augmented workflow, that person is increasingly the engineer. This is not a claim that engineers are inherently better product thinkers. It is a claim that collapsing implementation and product judgment into one role reduces the translation loss that separate roles introduce.

But this shift demands that engineers evolve. They need to become what I call builders: an engineer plus a PM. A builder combines technical capability with product instinct and can speak directly to users. Builders hear the user's voice without intermediate filters or distortion. They build in response to real needs and take responsibility for the result.

Why is this evolution necessary? Because engineers understand the boundaries of model capability better than anyone. When a user request comes in, an engineer can immediately assess whether it is feasible with current AI tools. This is the feasibility advantage, and it is real but incomplete. Knowing what can be built is not the same as knowing what should be built, for whom, and in what order. That requires product sense: user empathy, prioritization, and strategic judgment, skills that have traditionally lived with PMs. The builder thesis does not claim engineers already have these skills. It claims that compressing feasibility judgment and product judgment into the same person, and developing both, eliminates a translation layer that introduces more distortion than value. The translation that a non-technical PM provides, turning user needs into technical specifications, becomes much less necessary when the person building the product is trained to understand both sides.

To be clear: non-technical PMs can absolutely become builders. Learning models, tools, and the basics of implementation is entirely achievable. The "builder" identity is not gated by a CS degree.

To be explicit: the builder model is not an anti-PM position. It is a claim about the unit of responsibility. The core PM functions (understanding users, setting priorities, defining what success looks like) don't disappear. They get compressed into the same person who implements, so that the feedback loop between "what should we build" and "what can we build" runs inside one head instead of across a handoff boundary.

But the current reality in many organizations is different. Non-technical PMs lead engineering teams. When the PM lacks a working model of what AI can and cannot do, the translation from user need to technical specification introduces distortion. The engineer is cut off from the user, responsible only for tasks the PM hands down. This creates an inefficiency loop: the PM misspecifies, the engineer implements the misspecification, the user is dissatisfied, and AI's full potential goes unrealized. Breaking this loop, by collapsing the PM and engineer into a single "builder" who owns the entire outcome, is the structural unlock.

Hiring and compensation must change accordingly

In my own practice, the best method for identifying builders is a one-to-two-day challenge project. The task is deliberately scoped larger than what could reasonably be completed in the given time. What I'm evaluating is not whether the candidate finishes, but how effectively they leverage AI to accelerate progress. This reveals the real skill: not coding ability in isolation, but the ability to orchestrate AI toward a product outcome.

Compensation frameworks also need rethinking. The traditional "fixed salary plus bonus" structure is designed for workers who own a process step. It doesn't fit builders who own an entire outcome. I'm currently experimenting with a model that sits somewhere between a contractor and a partner, a structure that aligns economic incentives with end-to-end responsibility. This is an area I'd like to explore and debate with others.

What the research says

Linear exemplifies the builder model at scale. The company, reportedly valued at $1.25B, operates with roughly 100 employees and only 2 PMs. Teams of 2-4 assemble around projects and dissolve upon completion. No OKRs, no A/B tests, no story points. CEO Karri Saarinen has said he doesn't understand why massive teams became the norm; in his experience, small teams always delivered better quality and faster. (Specific headcount and retention figures are drawn from press interviews rather than official disclosures.)

Andrew Ng offered the most provocative quantitative framing: the traditional ratio of roughly one PM to four to six engineers may invert. He cited a team proposing one PM to 0.5 engineers, a ratio that would have been absurd a year earlier. His logic: engineers are 10x faster with AI, but PMs haven't sped up proportionally, making PMs the new bottleneck. Paradoxically, he also argues this could increase demand for people who can define what to build. This aligns with the builder thesis: the valuable skill isn't coding or spec-writing in isolation, but the fusion of the two.

The "product engineer" title more than doubled, from ~2% to ~5% of Hacker News "Who's Hiring" listings, between 2022 and 2024. Claire Vo's "Product Management is Dead" talk at the Lenny & Friends Summit argued AI will collapse the talent stack: the traditional triad of PM, engineering lead, and designer may evolve into a single "AI-powered Triple Threat."

The organizational flattening is already visible at large companies. Gartner predicts that by 2026, 20% of organizations will use AI to flatten their structure, eliminating more than half of current middle management positions. Amazon CEO Andy Jassy mandated increasing the individual-contributor-to-manager ratio by at least 15%.

On hiring: the landscape is still in flux. According to Karat, almost two-thirds of companies still prohibit AI use in interviews, and fewer than 30% are updating their assessments for AI. But early movers are signaling a direction: Meta is piloting interviews where candidates use AI assistants throughout coding tests. The shift I described, challenge projects that test AI fluency rather than raw coding ability, is not yet industry standard, but the trajectory is clear.

On compensation: a16z's December 2024 analysis notes that AI-native companies are moving toward outcome-based pricing, which will eventually cascade into compensation structures. PwC's 2025 data shows AI-skilled workers already command a 56% wage premium, double the prior year. The contractor-partner hybrid I'm exploring is one response to this shift, but the broader point is that compensation models designed for process workers will not retain builders.

The harder question

The three shifts described above (AI-first workflows, outcome-based collaboration, and the engineer-to-builder evolution) are mutually reinforcing, though I have not proven they are strictly interdependent. Each can be adopted partially or independently. But taken together, they point in the same direction: the organization's shape determines how much of AI's potential value it can actually capture. You can have the best models in the world and still capture less of that value if your org chart routes decisions through coordination layers that AI has already made less necessary. The evidence base for this claim comes primarily from AI-native startups and forward-leaning tech companies. Whether it generalizes to enterprises with legacy constraints, regulatory overhead, or thousands of employees is the harder test.

McKinsey's 2025 State of AI survey tested 25 organizational attributes and found that "redesign of workflows" had the single biggest effect on whether companies achieved EBIT impact from generative AI. Yet only 21% of organizations have fundamentally redesigned even some of their workflows, and BCG found that 60% of companies generate no material value from AI despite significant investment.

The most durable insight comes from the 2005 freestyle chess tournament that Garry Kasparov later wrote about: the winner was not a grandmaster with a powerful computer, but two amateurs with three weak computers and a superior process. Kasparov's observation, "weak human + machine + better process was superior to a strong computer alone and, more remarkably, superior to a strong human + machine + inferior process," remains the foundational principle.

Process design beats raw capability. Organizational design is often the binding constraint: not the only factor, but the most underestimated one.

The question I'm still working through, and invite others to work through with me, is what the steady-state version of this looks like. The builder model, the outcome-based team, the AI-first pipeline: these are working for us now, at a small company moving fast. Whether they hold at 50 people, or 500, is the experiment still running.