Based on interviews with anime production studios and AI startups in Japan, enriched with industry data and academic research.

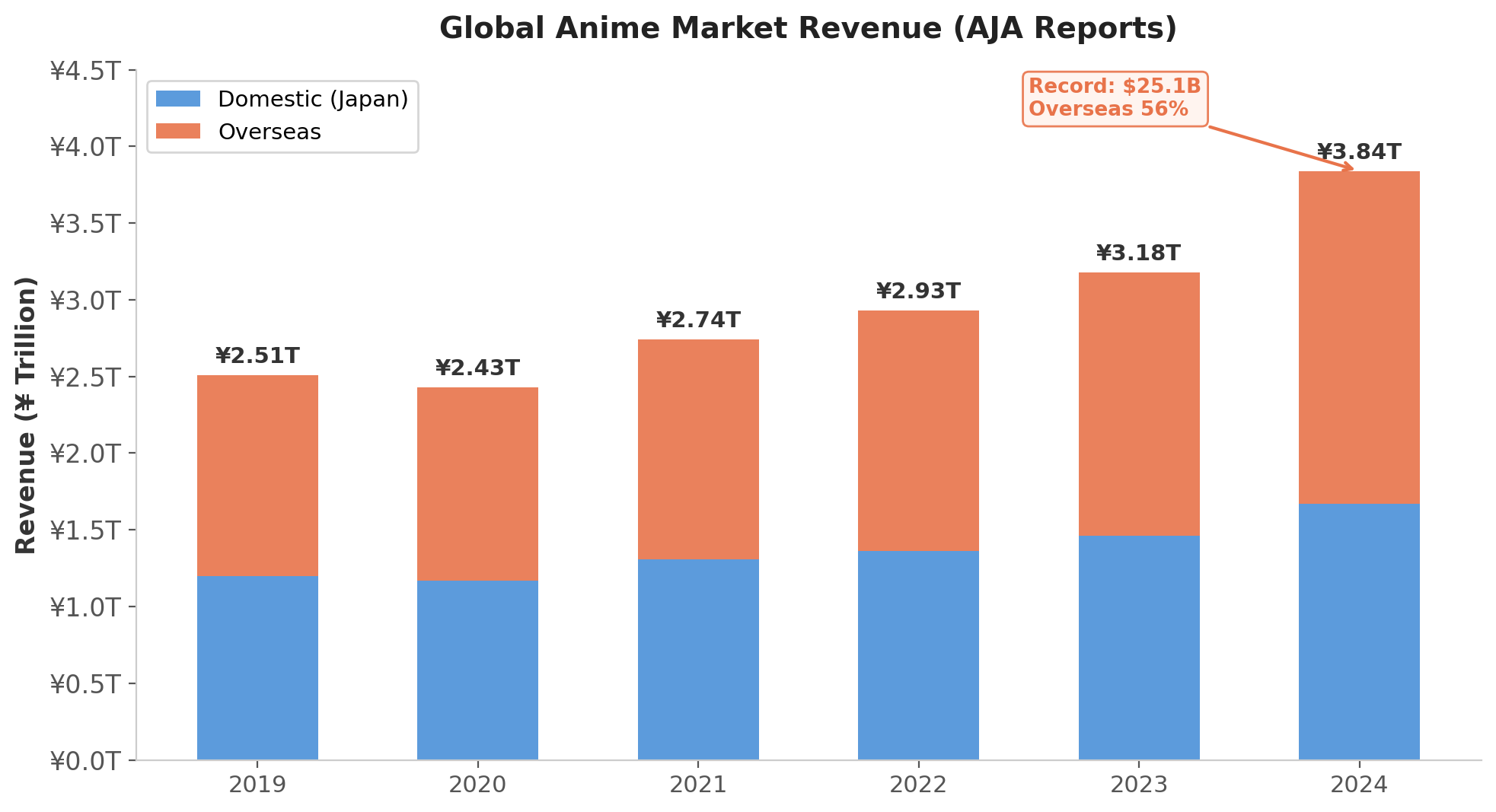

Anime is experiencing an unprecedented global boom. The Association of Japanese Animations (AJA) reported that the global anime market hit a record ¥3.84 trillion (~$25.1 billion) in 2024, a 14.8% year-over-year increase, with overseas revenue now accounting for 56% of the total. Crunchyroll surpassed 15 million paid subscribers in 2024, triple its 2021 figure; Netflix reported that over half its global members watched anime last year. By every measure, this is a market growing at extraordinary speed—industry projections converge on a CAGR of 9.11% through 2030, with some analysts at Parrot Analytics and Bernstein projecting the international anime streaming market alone will triple to $12.5 billion.

Figure 1: Global anime market revenue from 2019 to 2024 (AJA Annual Reports). Overseas revenue surpassed domestic for the first time in 2023 and now accounts for 56% of the total.

Yet if you wanted to produce an anime series today, simply booking a production slot at a reputable studio would require a two- to three-year wait. Building a series from scratch—from concept to broadcast—takes four to five years. The bottleneck appears to be supply: there are simply not enough skilled animators. But as the data below will show, the constraint is double-layered. The industry also suffers from broken economics—depressed wages, thin margins, and a business model that distributes booming revenues unevenly—which suppresses the very workforce expansion that could close the supply gap. Understanding this dual constraint is essential context for why the industry is turning to AI.

Anime is a relatively young medium with a distinctive aesthetic system. The generations who grew up with anime have never stopped watching—many fans born in the 1970s remain active viewers today—while each successive generation adds new audiences. This compound growth in viewership, combined with streaming platforms' global reach, has created a supply-demand gap that the industry's current production model cannot close.

Why anime production is more labor-intensive than you think

The supply side faces enormous structural challenges. Anime production—whether 2D hand-drawn or 3D CG—is extraordinarily labor-intensive, far more so than most outsiders realize. Next time you watch an anime episode, pay attention to the ending credits. The staff list for a single 24-minute episode is staggeringly long. Multiple outsourcing companies, each employing hundreds of people, handle specific production stages. A typical episode requires approximately 3,000 hand-drawn frames across roughly 300 individual cuts, produced by over 100 people across two months. High-action episodes can require 5,000 to 10,000 drawings; the 2009 film Redline consumed 100,000 entirely hand-drawn frames over seven years.
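To make those per-episode figures easier to picture, the arithmetic can be sketched as below. This is only a back-of-the-envelope illustration: it treats the full 24 minutes as animated runtime (a simplification, since credits and recaps are included) and uses the "typical episode" numbers quoted above, not data from any specific production.

```python
# Figures quoted in the text for a typical TV episode.
DRAWINGS_PER_EPISODE = 3_000
CUTS_PER_EPISODE = 300
RUNTIME_MINUTES = 24  # simplification: the whole runtime is treated as animated

runtime_seconds = RUNTIME_MINUTES * 60

# Average workload per cut and per second of screen time.
drawings_per_cut = DRAWINGS_PER_EPISODE / CUTS_PER_EPISODE
seconds_per_cut = runtime_seconds / CUTS_PER_EPISODE
drawings_per_second = DRAWINGS_PER_EPISODE / runtime_seconds

print(f"Average drawings per cut:    {drawings_per_cut:.1f}")
print(f"Average cut length (s):      {seconds_per_cut:.1f}")
print(f"Average drawings per second: {drawings_per_second:.2f}")
```

On these averages, each cut lasts under five seconds and needs about ten drawings. Averages hide the spread, though: a still conversation shot may need only a handful of drawings, while the high-action episodes mentioned above need several times more.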

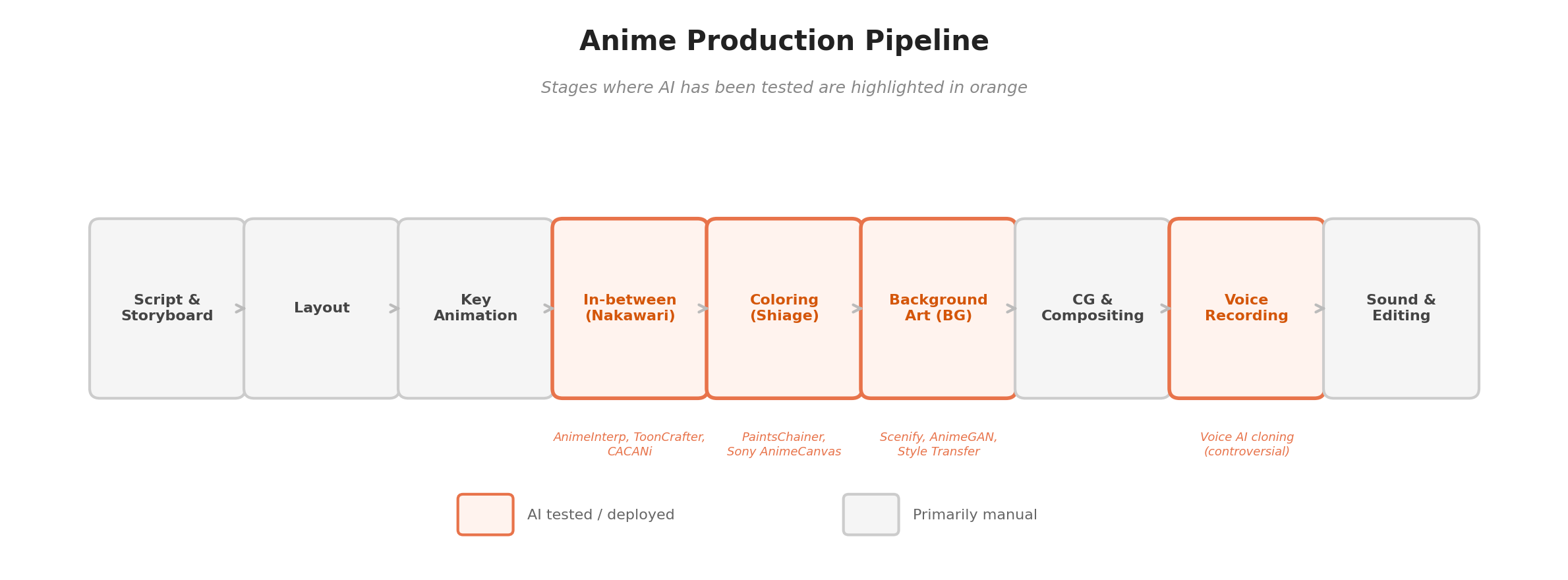

Figure 2: The anime production pipeline. Stages where AI has been tested or deployed are highlighted in orange. Most stages remain primarily manual.

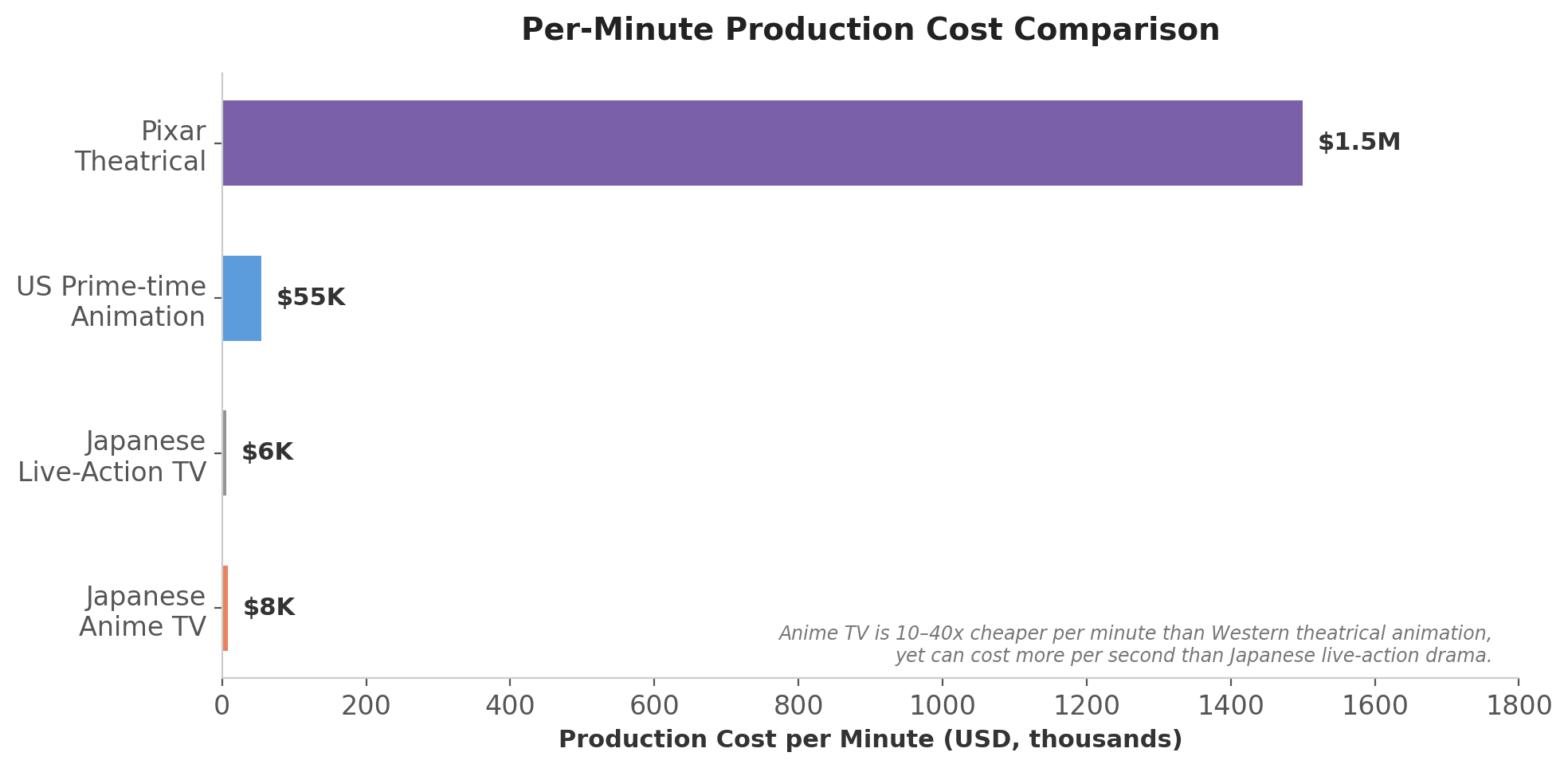

The degree of industrialization in the anime industry was lower than I expected. Even a few seconds of character movement demands 15 to 30 hand-drawn frames, and one episode can easily require thousands of original drawings. This leads to a counterintuitive economic reality: the per-minute production cost of anime can exceed that of live-action drama. Industry data from Weekly Toyo Keizai prices a single TV anime episode at ¥31.2 million (~$224,000), while high-end productions like Demon Slayer and Jujutsu Kaisen can cost significantly more. Across productions, TV anime runs roughly $5,700–$10,000 per minute, which may sound cheap until you consider that live-action Japanese TV dramas, which employ real actors on real sets, can cost less per minute of screen time.

Figure 3: Per-minute production cost comparison across media types. While anime is 10–40x cheaper than Western theatrical animation, its per-minute cost can exceed that of Japanese live-action TV drama, a counterintuitive finding driven by the sheer volume of hand-drawn artwork required.
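As a rough check on how the per-episode price relates to the per-minute range, the conversion can be sketched as follows. The 24-minute runtime is an assumption borrowed from the typical episode length mentioned earlier; the Weekly Toyo Keizai figure itself does not state a runtime here, so the result is indicative only.

```python
# Per-episode cost quoted in the text (Weekly Toyo Keizai figure).
EPISODE_COST_YEN = 31_200_000
EPISODE_COST_USD = 224_000
# Assumption: a standard 24-minute TV episode.
RUNTIME_MINUTES = 24

yen_per_minute = EPISODE_COST_YEN / RUNTIME_MINUTES
usd_per_minute = EPISODE_COST_USD / RUNTIME_MINUTES
usd_per_second = usd_per_minute / 60

# Exchange rate implied by the two quoted figures (yen per dollar).
implied_yen_per_usd = EPISODE_COST_YEN / EPISODE_COST_USD

print(f"Cost per minute: ¥{yen_per_minute:,.0f} (~${usd_per_minute:,.0f})")
print(f"Cost per second: ~${usd_per_second:,.2f}")
print(f"Implied exchange rate: {implied_yen_per_usd:.1f} yen per dollar")
```

Under that assumption, the quoted episode price lands at about $9,300 per minute, toward the upper end of the $5,700–$10,000 range.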

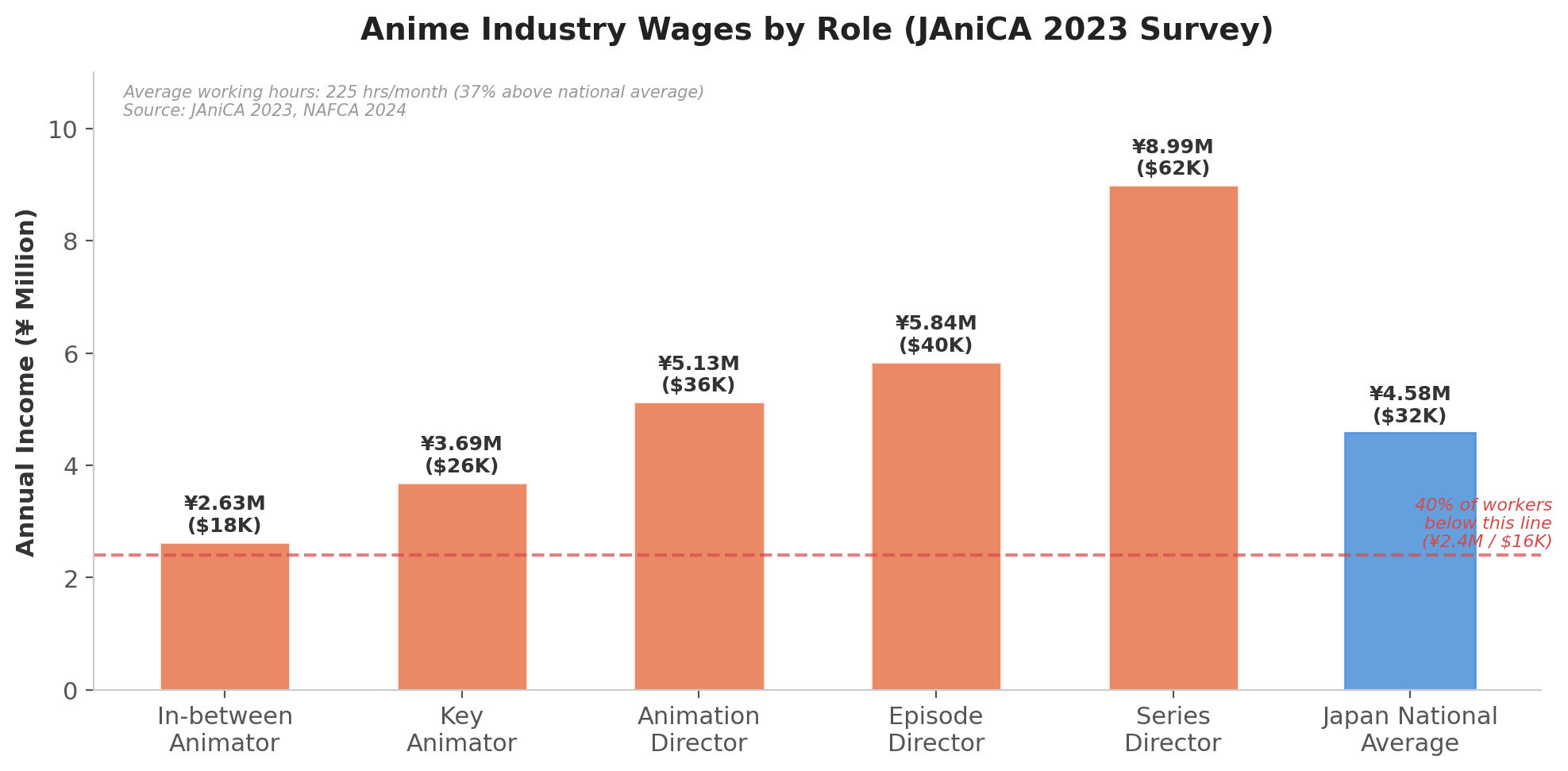

The only reason the industry can operate at these price points despite such labor intensity is severely depressed wages. The 2023 JAniCA (Japan Animation Creators Association) survey found that in-between animators earn an average of just ¥2.63 million ($18,200) annually and that workers aged 20–24 earn ¥1.97 million ($14,660); across the full sample, respondents worked an average of approximately 198 hours per month. A separate 2024 NAFCA (New Anime and Film Creator Association) survey reported even longer hours among younger animators: a median of 225 hours per month (average 219 hours), 37% above Japan's national average.

In 2023, a UN Working Group on Business and Human Rights noted in its end-of-mission statement that anime industry workers face low wages, excessive working hours, and unfair contractual practices that leave creators vulnerable to exploitation. Teikoku Databank found that anime studio closures have risen for three consecutive years, and among prime and gross contractors (元請・グロス請), only 40% reported revenue gains in 2024—a figure even lower than the 42.9% across all anime-related companies. The industry's own trade body describes it as "busy but unprofitable."

Figure 4: Annual income by role in the anime industry (JAniCA 2023 Survey). In-between animators—the most common entry-level role—earn well below the national average. The dashed line marks the threshold below which approximately 40% of all anime workers fall.

Three approaches to AI in anime production

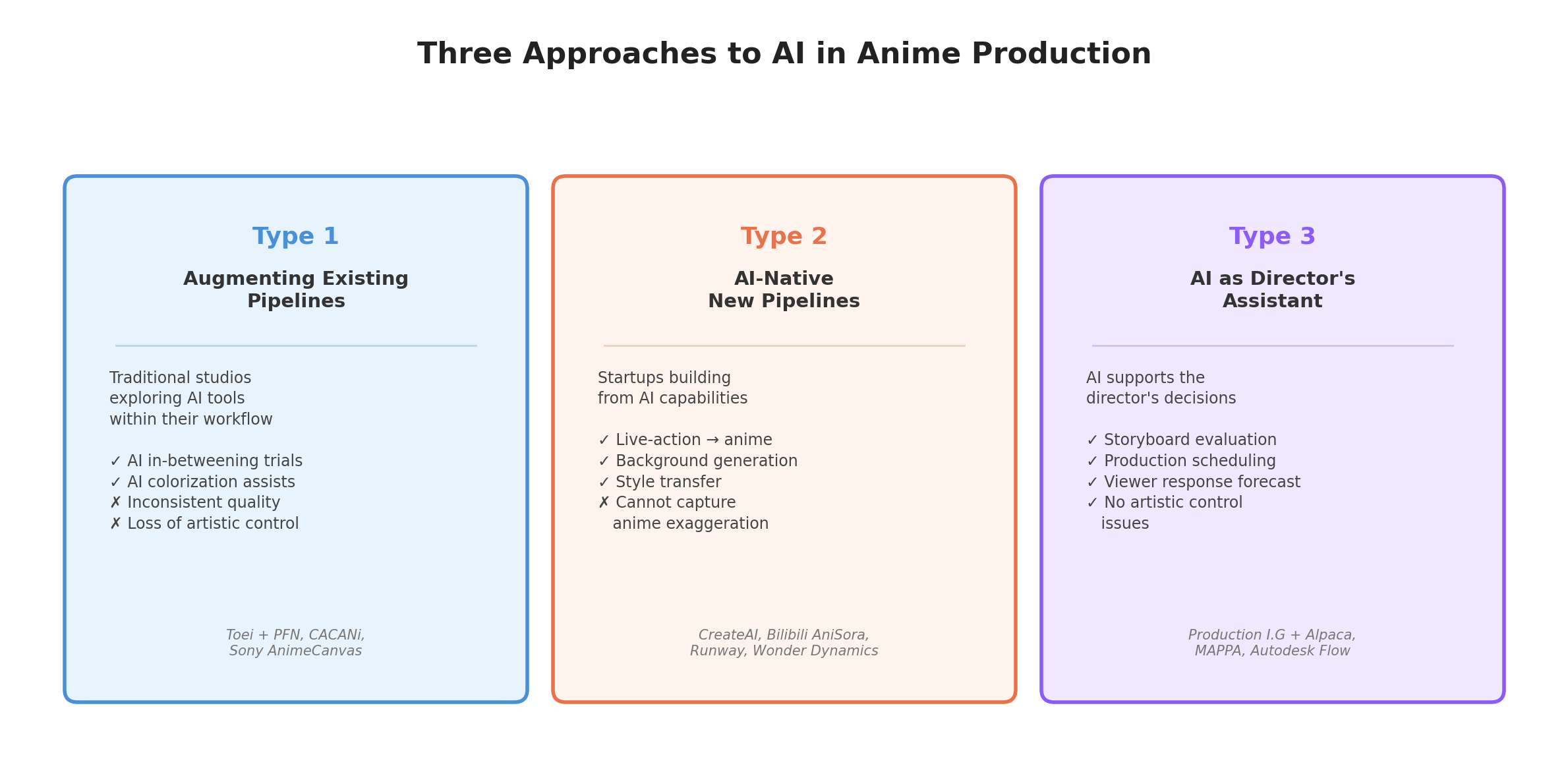

The interviews and cases I gathered cluster into three broad approaches to integrating AI into the production process: augmenting the existing pipeline, experimenting with generative models as a primary production method, and assisting the director with evaluation and coordination. These approaches are not mutually exclusive; a single studio may pursue more than one simultaneously, and the boundaries between them are porous. But the distinction is useful because each approach encounters fundamentally different obstacles and opportunities.

Figure 5: A framework for understanding three distinct approaches to AI in anime production, based on field interviews with studios and startups in Japan.

Type 1: Traditional studios augmenting their existing pipeline

The first category comprises established anime studios—the names many fans would recognize—that are actively exploring how to integrate AI into their existing production workflows. Every studio I spoke with was interested in AI, driven by the same supply-side pressure: there simply are not enough skilled animators to meet demand, and the backlog of manga and light novel adaptations waiting for production slots continues to grow. Several studios described schedule slippage and project delays extending into 2026, citing workforce constraints as the primary cause.

However, AI is an extremely sensitive topic among creators, and these studios rarely publicize their experiments. The gap between private exploration and public posture is wide. When Toei Animation announced an investment in AI firm Preferred Networks on April 30, 2025, the backlash that erupted in May was so severe that the company edited its financial presentation to remove AI demonstration images and issued a clarification stating it was "not using AI technology" in any current productions.

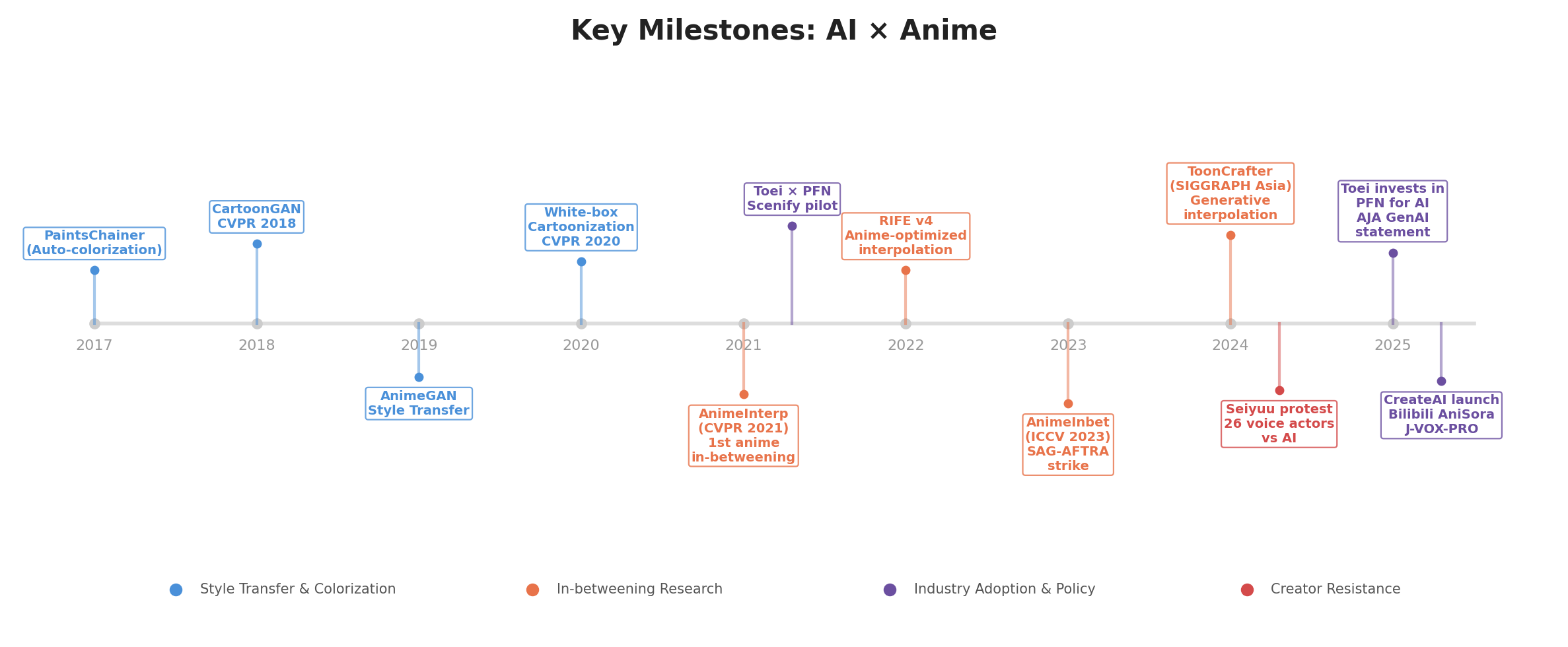

The most intuitive application these studios are exploring is AI-generated in-betweens (nakawari). In anime production, key animation and in-betweening are separate roles: experienced key animators (genga-man) draw the critical poses—a character picking up a coffee cup, bringing it to their lips, taking a sip—while junior animators fill in the frames between those poses. Because in-betweening is technically less demanding than key animation, it has attracted enormous research attention. Numerous academic papers and startups have demonstrated AI in-betweening capabilities, from the foundational AnimeInterp (CVPR 2021) to ToonCrafter (SIGGRAPH Asia 2024, Tencent AI Lab), a generative diffusion approach that can handle non-linear motion and dis-occlusion.

But reality has proven harsh. At the studios I visited, AI-assisted in-betweening had been tested but yielded inconsistent results. Because the output quality was unstable, every AI-generated in-between required rigorous checking and correction, and the time spent on quality control effectively negated any time savings. One key animator put it plainly:

"I delegate in-betweens to ten interns. I can tell each of them exactly what's wrong and how to fix it. Each submission gets better. But with AI, there's no guarantee I'll get what I need, and sometimes I simply can't move the production forward."

This points to a deeper issue. Most anime directors maintain intense aesthetic convictions and demand 100% controllability from their tools. At this stage, AI cannot offer that level of fine-grained control. The technology struggles with core elements of anime's visual grammar: timing charts (non-uniform frame spacing for ease-in/ease-out), smear frames (deliberately distorted drawings conveying speed), impact holds (a head snapping 90° between frames for dramatic emphasis), and secondary motion (a sleeve or hair following movement with physics-based delay). AI treats smears as artifacts to correct and fills dramatic holds with meaningless intermediate motion. As a result, AI in-betweening in its current state is limited to studios operating under extreme budget constraints where some loss of artistic control is an acceptable tradeoff.
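To illustrate what "non-uniform frame spacing" means in practice, here is a minimal sketch comparing evenly spaced in-betweens with an ease-in/ease-out spacing. It is a toy model, not how any studio tool or the AI systems named above work: positions are single numbers along a straight path, and the smoothstep curve is an assumed stand-in for an animator's hand-written timing chart.

```python
def uniform_fractions(n_inbetweens):
    """Evenly spaced fractions between two key poses (naive interpolation)."""
    return [i / (n_inbetweens + 1) for i in range(1, n_inbetweens + 1)]


def ease_in_out_fractions(n_inbetweens):
    """Smoothstep spacing: drawings cluster near both key poses, so the
    motion starts slowly, moves fast in the middle, and settles slowly."""
    return [t * t * (3 - 2 * t) for t in uniform_fractions(n_inbetweens)]


def inbetween_positions(start, end, fractions):
    """Place each in-between at the given fraction of the way from start to end."""
    return [start + (end - start) * f for f in fractions]


# Hypothetical key poses: a hand moving from x=0 to x=100 with three in-betweens.
start_x, end_x = 0.0, 100.0
even = inbetween_positions(start_x, end_x, uniform_fractions(3))
eased = inbetween_positions(start_x, end_x, ease_in_out_fractions(3))

print("Uniform spacing:    ", even)
print("Ease-in/out spacing:", eased)
```

The two lists share the same key poses and the same number of drawings, yet describe different motion. A real timing chart encodes that choice shot by shot, and smears and holds depart from smooth spacing altogether, which is part of why evenly interpolated output tends to need correction.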

Commercial in-betweening tools do exist. CACANi, developed at Nanyang Technological University, has been used by David Production on Cells at Work, with reported time reductions of up to 70%, though primarily for effects animation (fire, smoke, explosions) and simple movements rather than complex character acting. Sony's AnimeCanvas, still in development, reduced coloring workflow clicks by approximately 15% in trials at A-1 Pictures and CloverWorks. These are meaningful but narrow improvements, not transformations of the core production process.

Type 2: Generative AI experiments—from style transfer to pipeline rethinking

The second approach is defined not by company type but by ambition: rather than inserting AI into a single existing step (as in Type 1), these efforts experiment with generative models as a primary production method—sometimes rethinking entire pipeline stages, sometimes discovering why that does not yet work. This group includes AI-native startups, but also incumbent studios and production committees testing fundamentally different methods. The common question is: "starting from what generative AI can do today, what kind of anime can we create?"

One common approach is live-action to anime conversion: filming real actors, processing the footage through motion capture and neural style transfer, and outputting anime-style visuals. Tools like Luma AI, DomoAI, and Komiko offer one-click anime conversion with specific style presets. CreateAI (formerly TuSimple, the autonomous trucking company, which pivoted to AI animation in December 2024) launched Animon.ai, an anime-specific video generation platform. Bilibili released AniSora, an open-source anime video generation model trained on over 10 million clips.

However, the limitations of this approach are immediately apparent in the output. Anime's visual power comes from deliberate exaggeration—that quintessentially anime expression where the mouth stretches impossibly wide and nearly touches the eyes in a grin, the way a character's head distorts during a scream, the exaggerated bounce in a running cycle. These are not naturalistic movements that motion capture can record from a human actor. The artistic vocabulary of anime is defined by its departure from physical reality, and style transfer from live-action footage inherently pushes toward realism, which is the opposite of what anime requires. Extensive manual reworking of motion capture data is often necessary, sometimes taking longer than keyframe animation from scratch.

Where generative models do work convincingly today is static background generation. Backgrounds are single images requiring no temporal consistency, they rely on environmental patterns that diffusion models handle well, and they tolerate minor inconsistencies far more readily than character art. Toei Animation and Preferred Networks developed Scenify, which converts photographs into anime-style backgrounds and reduced pre-processing time to one-sixth of conventional methods. (Scenify could equally be classified as a Type 1 augmentation—Toei is a traditional studio inserting a generative tool into one pipeline step. Its placement here reflects the tool's generative nature rather than the studio's organizational posture, illustrating why the three approaches overlap in practice.)

Beyond backgrounds, some productions have experimented with broader AI-assisted workflows. The Spring 2025 anime Twins HinaHima used AI-assisted production across over 95% of its cuts—spanning not just backgrounds but multiple pipeline stages—with human artists providing final corrections and touch-ups on every frame. While this represents one of the most extensive deployments of AI in a broadcast anime to date, it remains an outlier rather than an industry norm.

But it is important to keep perspective on what AI-generated backgrounds mean economically. Background art, while visually important, does not represent a dominant share of overall production costs. The exception that proves the rule is Makoto Shinkai, whose films are famous for their extraordinary background art: the photorealistic, emotionally charged environments of Your Name and Suzume. Studios like Kusanagi, which painted the backgrounds for Your Name, employ artists with decades of training in atmospheric perspective and architectural detail. AI models like AnimeGANv2 are explicitly trained on Shinkai's films and can approximate his atmospheric quality and color palettes for single images. But Shinkai's backgrounds embed narrative meaning through specific light, weather, and environmental details that reflect character psychology. Replicating this intentionality consistently across an entire production remains beyond current AI capabilities. And for studios not operating at that rarefied level of background quality, the cost savings from AI backgrounds, while real, are modest relative to the overall production budget.

Type 3: AI as the director's assistant

The third and perhaps most intriguing category uses AI not for generating images or animation itself, but as an evaluation and coordination layer assisting the director. In anime production, an enormous volume of decisions converge on the director: evaluating storyboards, reviewing key animation cuts, approving color palettes, assessing pacing, coordinating between departments. The director is the ultimate bottleneck not because they are slow, but because every creative judgment passes through them.

Several companies I interviewed are deploying analytical and predictive AI to accelerate this evaluation process. The AI does not draw a single frame; instead, it helps the director assess and prioritize the work of human artists more efficiently. Examples include AI tools that analyze storyboard compositions against historical audience-engagement data to flag potential pacing issues, and post-production pipelines that automate routine calculations for lip-sync timing and motion blur parameters—tasks that are technically part of the production pipeline but do not involve generating artwork, freeing the director from repetitive technical review. (Note: specific studio implementations in this category were shared under anonymity agreements and cannot be attributed here.)

This is a genuinely interesting application of AI, one that largely avoids the most acute controllability problems of generative image creation. The AI is not generating visual content; it is helping the human creator evaluate and manage the creative output of other humans. That said, any tool that flags pacing issues or automates timing parameters inevitably shapes creative outcomes to some degree—the control problem is attenuated, not eliminated. Still, compared to Types 1 and 2, the artistic risk is substantially lower: the director retains final judgment, and the AI's role is to reduce the time spent on evaluations that are routine or data-amenable, freeing them for decisions that require genuine artistic vision.

The audio frontier: voice AI has already crossed the threshold

Everything discussed above concerns the visual side of anime production. But there is another domain where AI has progressed much further: audio.

Voice AI is far more mature than its video counterpart. In certain commercial contexts—navigation prompts, virtual assistants, short-form content—AI-generated voice can be difficult for lay listeners to distinguish from a human performance. (Music AI is also advancing rapidly but faces a different set of challenges and is beyond the scope of this article.) And critically, the central debate around voice AI has already shifted from technology to economics—from "can AI replicate a human voice?" (it can) to "what is the economic and ethical relationship between AI voice technology and the human performers whose livelihoods depend on voice work?"

Recently, many prominent voice actors have spoken out publicly against AI. In October 2024, 26 leading Japanese voice actors—including Ryūsei Nakao (Frieza), Kōichi Yamadera (Spike Spiegel), Yūki Kaji (Eren Yeager), and Romi Park (Edward Elric)—launched the "No More Unauthorized Generative AI" campaign. Nakao opened their announcement video saying: "Someone was selling my voice without permission. I was shocked. Our voices are our livelihoods. They are our lives." A Japan Actors Union survey found 267 voice actors' voices had been used without authorization on platforms like YouTube and TikTok.

In the United States, SAG-AFTRA's 118-day strike in 2023 secured explicit protections: studios cannot create or reuse a digital replica of a performer's voice without informed consent and compensation for each specific use. In Japan, the legal framework remains more ambiguous. Article 30-4 of the Copyright Act permits the use of copyrighted works for purposes that do not involve "enjoying" the expressive content itself, which has been interpreted to cover many AI training scenarios. However, the Agency for Cultural Affairs has clarified two important limitations: the use must genuinely be non-enjoyment-purpose, and it must not "unreasonably prejudice the interests of the copyright holder." The practical boundaries of these exceptions remain contested, but the provision is widely regarded as making Japan comparatively AI-friendly for training purposes.

Notably, the voice actors' campaign is not categorically anti-AI. Their statement includes: "New technology can bring great benefits to humankind... We would like to consider ways of using this technology together." Yūki Kaji's Soyogi Fractal project offers creators a way to use his voice officially. Aoni Production partnered with CoeFont to offer AI-replicated voices of Masako Nozawa (Goku) for non-performance applications like virtual assistants. In November 2025, the Japan Actors Union launched J-VOX-PRO, an official voice database with digital watermarking and voiceprint recognition—an attempt to build institutional infrastructure for consent-based AI voice use.

Voice AI has already moved beyond the stage of being merely a "tool." It is now a force that directly threatens the economic foundation of an entire profession. This raises an uncomfortable question that the anime industry cannot avoid much longer:

Will visual AI eventually become the same kind of existential threat to animators that voice AI has already become to voice actors?

The technology trajectories suggest visual AI may follow a similar institutional path, though the timeline and outcome are far from certain. Video generation models are improving rapidly: Runway, now valued at $3 billion, can generate anime-style sequences from text prompts; ToonCrafter can interpolate between keyframes with generative diffusion; Bilibili's AniSora was trained on over 10 million anime clips. Today, these tools produce output that falls far short of broadcast quality. As this article has argued, the controllability, temporal consistency, and anime-grammar challenges facing visual AI are qualitatively different from, and arguably harder than, those that voice AI faced. Voice is a single-channel, temporally continuous signal; animation is a multi-layered, deliberately discontinuous art form.

But here is the critical point: the governance questions do not wait for the technology to mature. Even while visual AI remains inadequate for broadcast-quality production, the upstream issues are already live: rights over training data, consent frameworks for artistic styles, contractual protections for animators whose work feeds AI models, and workflow governance for studios already experimenting behind closed doors. The voice AI experience demonstrates that by the time the technology crosses the quality threshold, the institutional vacuum is already entrenched and far harder to remedy. Voice AI, too, was dismissed as inadequate until it suddenly was not. The anime industry would be wise to study how the voice acting domain navigated this transition (the organized resistance, the consent frameworks, the institutional responses), because visual AI may eventually demand similar answers, and the window for proactive preparation is narrower than it appears.

Figure 6: Key milestones in AI × Anime, from early style transfer research to today's industry-wide debates. The trajectory shows academic research (blue, orange) increasingly intersecting with industry adoption (purple) and creator resistance (red).

This report is based on field interviews conducted with anime production studios and AI startups in Japan during 2024–2025, supplemented by industry data from the Association of Japanese Animations (AJA), Teikoku Databank, JAniCA, and published academic research. Studio-level details have been anonymized where requested.